[From Bill Powers (2012.12.08.1043 MSRT)]

Bruce Abbott (2012.12.07.1830 EST) –

BA: One of us is confused here, and I don't think it's me! (nor Martin)

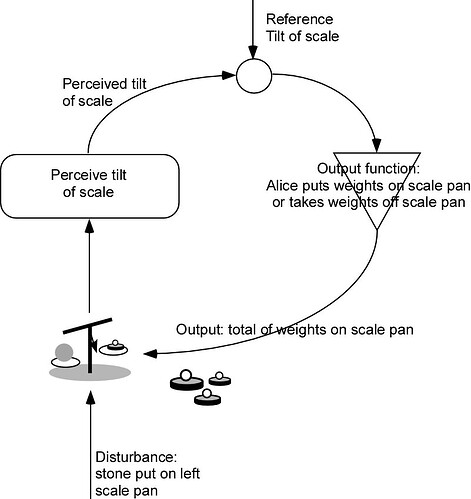

Martin Taylor (2012.12.06.23.52)

MT: … Somehow, the information from the disturbance appears at the output, and the only path through which this can happen is by way of the internal circuitry of the control system.

RM: You are assuming that the only way for information about the disturbance to appear at the output is for this information to have gone through the organism.

BA: How else could it get there? Magic?

BP: I’m gradually getting the idea (which I wish someone would explain clearly) that the information-theoretic analysis of a control system can’t be the basis for designing a control system or explaining its operation. Martin acts all impatient and disgusted at me for not understanding this self-evident and obvious fact, but can’t we all just accept the fact that Bill is old, slow, and ignorant, and try to make all these obvious facts a little easier for a creaky worn-out brain to understand? Is all this stuff about information theory actually so completely worked out that there are no doubts about it at all any more? If so, why not just explain it all? Or do you have to have a Mensa pin to understand it?

A point I need to have cleared up is how the information from the disturbance can appear at the output, as Martin, and now Bruce Abbott, claim it does. How can you verify that?

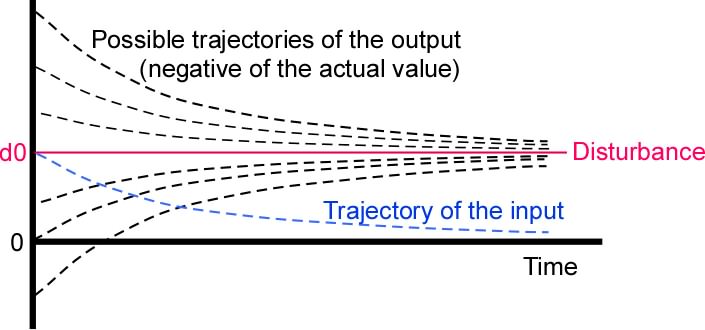

My problem is very simple. The input quantity (or better, the perceptual signal) is a physical variable with a certain magnitude at any moment. That magnitude is the sum of effects from two other signals, d and o, disturbance and output. But the magnitude of the physical variable is only a single number, and it could be the sum of, literally, an infinity of effects from other signals in pairs or larger sets. So what can there be about a perceptual variable, which is known to the control system only as a single number at any given moment, that gives it a unique relationship to a disturbance, a sum over many possible causal variables? If the controlled variable raises that problem, so does the output variable.
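To make the point about the single number concrete, here is a minimal sketch in Python. It assumes the input quantity is nothing more than the plain sum qi = d + o; the name qi, the function decompositions, and the small integer grid of candidate values are illustrative choices only, not anything taken from the discussion.

```python
def decompositions(qi, candidates):
    """Return every (d, o) pair drawn from candidates whose sum equals qi."""
    return [(d, o) for d in candidates for o in candidates if d + o == qi]

# A small integer grid of possible magnitudes, chosen only for illustration.
candidates = range(-5, 6)

# One observed magnitude of the input quantity.
pairs = decompositions(2, candidates)

# Many different (d, o) pairs produce the same single number.
print(len(pairs), pairs[:3])
```

Even on this tiny grid, several different disturbance and output pairs give exactly the same magnitude, so the magnitude taken by itself at one instant does not pick out any one of them.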

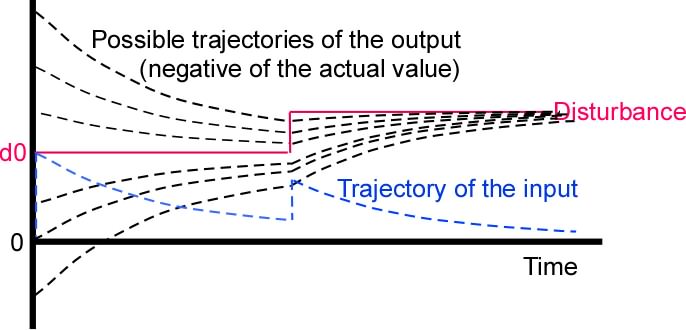

The Taylor/Abbott claim seems to me like claiming that the sweetness contributed to a cup of tea by a particular sugar cube can be identified in the final sweetness resulting from all the sugar cubes dissolved in the tea. Yes, the particular sugar cube’s effect appears in the sweetness, but given the sweetness, there is no way to work backward to deduce the presence of any particular sugar molecule or collection of molecules. So if you think of a control system as a channel carrying a message from the disturbance to the output, you have to explain how that message can have any meaning when its elements are taken more or less at random from two or more other simultaneous messages (d and o).

It seems to me that the physics of message transmission takes precedence over the information-theoretic and semantic aspects. If you subtract a signal carrying one message from another signal carrying a different message, you get a single signal which has only one magnitude at any given instant. Perhaps someone up on the mathematics of information theory can tell me what happens to the information in each of the two input signals. Surely the information in the sum of the signals is not just the sum over the information in each contributing signal. And the meaning of the output message surely is not the sum of the meanings of the incoming messages!
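For what it is worth, one toy case can be worked out directly. The sketch below is only an illustration under assumptions of my own choosing: two independent signals, each a fair binary digit, combined by simple addition. It computes the Shannon entropy of each signal and of their sum; the helper name entropy and the variable names are hypothetical.

```python
from collections import Counter
from itertools import product
from math import log2

def entropy(probs):
    """Shannon entropy in bits of a discrete probability distribution."""
    return -sum(p * log2(p) for p in probs if p > 0)

# Assumed toy signal: a fair binary digit (0 or 1 with equal probability).
bit = {0: 0.5, 1: 0.5}

# Distribution of the sum of two independent such signals.
sum_dist = Counter()
for (x, px), (y, py) in product(bit.items(), bit.items()):
    sum_dist[x + y] += px * py

h_each = entropy(bit.values())     # entropy of one signal
h_sum = entropy(sum_dist.values()) # entropy of the summed signal

print(h_each, h_sum)
```

In this toy case each signal carries 1 bit, but the sum carries 1.5 bits rather than 2, because the two ways of getting a sum of 1 cannot be told apart. It says nothing by itself about a closed loop, where d and o are not independent.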

Best,

Bill P.