such others as Hein and Held in the 1960s, suggests you are. You can change the sun angle above the horizon level to whatever you want if you can run/fly fast enough. But usually you don’t do that. You let the sun be wherever it is, and accept the resulting shadow configurations as whatever its current position produces.

The point of the TCV isn’t what might be controlled, but what IS being controlled out of the multi-millions of perceptions that you have at any one moment (using “perceptions” in the PCT sense of a sensory signal dependent on past or present sensory information).

Just look around you at those few perceptions of which you are consciously aware. Is there a bookcase in your field of view? Do you see wide lighter vertical stripes separated by thin dark stripes (the books and the strips between them)? On the lighter stripes do you see patterns of different colour? Do some of the smaller of those patterns form characteristic shapes (the letters of words on the spine)? How many of these conscious perceptions from one bookcase and one book are you at that moment actively and individually controlling? Or look at a tree in the wind, in which you see thousands of leaves fluttering in their individual rhythms, showing their vein patterns at different sun angles and in varying shade. How many of those fluttering leaf positions and vein patterns are you actively controlling at this moment? How many trees would you see if you were in a meadow looking at a woodlot nearby?

Let’s consider some numbers. We have at most a few hundred individually controllable muscles. All any one of those muscles can do is get shorter or longer. We do emit chemicals, but not more than a few tens of truly distinctive ones. Suppose that someone highly trained is able to command all of these outputs individually and independently, and could do so with a bandwidth of, say, 10 Hz. Since a signal of bandwidth W carries at most about 2W independent values per second, that is to say that the person is able to produce 20 different and unrelated lengths for each of, say, 250 muscles every second continuously. That is a control rate of 20 × 250 = 5,000 degrees of freedom per second. That vast overestimate (the true number is more likely to be nearer 10 or 20 df/sec) is what we have available for control. Any attempt to control more than that through the environment (as opposed to “in imagination”) will inevitably result in conflict inside the control hierarchy.
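The arithmetic above can be checked in a few lines. This is a minimal sketch, assuming the sampling-theorem rule of thumb that each independent channel of bandwidth W carries at most 2W independent values per second; the function name `dof_per_second` is hypothetical.

```python
def dof_per_second(n_channels: int, bandwidth_hz: float) -> float:
    """Upper bound on independent values per second across n_channels.

    Assumes every channel is independent and, by the sampling theorem,
    carries at most 2 * bandwidth_hz independent values per second.
    """
    return n_channels * 2 * bandwidth_hz


# 250 independently commanded muscles at a 10 Hz bandwidth (the generous
# overestimate above): 20 values per muscle per second.
output_dof = dof_per_second(250, 10)
print(output_dof)  # 5000
```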

Now consider the possible perceptions that might be controlled. One approach is to look at the input sensory system, considering just orders of magnitude: visual – never mind the hundred million or so receptor cells – the optic nerve has around a million independent fibres with bandwidths in the 10 Hz plus range; auditory, about 30,000 fibres; tactile, I’ve no idea how many sensory fibres, but there must be a lot. You get the idea. The input sensory bandwidth is in the tens of millions of degrees of freedom per second. Obviously the true number of perceptual degrees of freedom is not given by the number of fibres, since their firings are coordinated by the coherence of the scenes, soundscapes, and other sensed aspects of the environment. Optic input is coordinated into gross features such as moving spots, edges, lines and the like. Detectors of directed motion and shape such as these are probably the lowest level of the perceptual hierarchy, but if the lowest level is, as Powers would have it, “intensity”, that only makes the problem worse, because each input fibre conveys an intensity signal (really a time-differential intensity signal in most cases) in its individual firing rate.
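The same rule of thumb gives the order of magnitude on the input side. This sketch assumes (hypothetically) that auditory fibres share the roughly 10 Hz bandwidth given for optic-nerve fibres, and it leaves out touch, for which no figure is given, so it understates the total.

```python
def dof_per_second(n_channels: int, bandwidth_hz: float) -> float:
    # At most 2 * bandwidth independent values per second per channel.
    return n_channels * 2 * bandwidth_hz


optic = dof_per_second(1_000_000, 10)   # ~1 million optic-nerve fibres
auditory = dof_per_second(30_000, 10)   # ~30,000 fibres; bandwidth assumed
input_dof = optic + auditory            # touch omitted (no figure given)

output_dof = dof_per_second(250, 10)    # generous estimate of output df/s

print(f"{input_dof:,.0f} input df/s vs {output_dof:,.0f} output df/s")
print(f"ratio ~ {input_dof / output_dof:,.0f} : 1")
# 20,600,000 input df/s vs 5,000 output df/s
# ratio ~ 4,120 : 1
```

Even with touch left out, the available inputs outnumber the generous output estimate by more than three orders of magnitude.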

So, we have at least millions of perceptions available for control at the lowest level, all possibly changing at a rate above 1 Hz. If we tried to control them all at the same time, our control structure would get into the mother of all conflicts, and would probably be able to control nothing at all. We do have to control only some of them, leaving most uncontrolled at any one moment – though we could shift which ones we control to any small subset of the myriad possibilities. Shifting what we control is a very important part of PCT. It’s in Chapter 2 of LCSIII, albeit at a very much higher level, where the degrees-of-freedom limit is imposed by the environment, not the organism.
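A toy simulation makes the conflict concrete. This is an illustration under simple assumptions, not a model from the text: two integrating control systems both perceive the same single environmental variable x (one degree of freedom) but have different reference values. The names and parameter values are hypothetical.

```python
def simulate_conflict(r1: float, r2: float, d: float = 0.0,
                      gain: float = 0.1, steps: int = 1000):
    """Two control loops sharing one environmental degree of freedom."""
    o1 = o2 = 0.0                 # outputs of the two control systems
    for _ in range(steps):
        x = o1 + o2 + d           # both outputs and the disturbance add into x
        o1 += gain * (r1 - x)     # each system integrates its own error
        o2 += gain * (r2 - x)
    x = o1 + o2 + d
    return x, o1, o2


x, o1, o2 = simulate_conflict(r1=1.0, r2=-1.0)
print(f"x = {x:.3f}, errors = {1.0 - x:.3f} and {-1.0 - x:.3f}")
print(f"outputs = {o1:.1f} and {o2:.1f}")
```

x settles midway between the two references, so neither error goes away, while the two outputs push against each other and keep growing in proportion to the length of the run. With one degree of freedom, at most one of the two perceptions could have been controlled.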

···

[From Adam Matic 2014.03.15.1100 cet]

(A side note: I'm having difficulty thinking about variables that can be perceived, but not controlled. I think every variable that can be perceived can be controlled,

AM:

I don't really understand what is going on in these experiments with all the smaller and larger control loops, but if you say they reveal properties of control loops, then they could also be used in making models. Is that possible and if so, how do these models perform?

Bruce and I both answered the direct question of how the models perform, so I’ll try to help with “smaller and larger control loops”.

We don't need to enquire what perception the experimenter is controlling, for which running the experiment is the action output. For some reason the experimenter wants to know something, and investigating “wanting to know” opens a whole can of worms. To avoid opening this can, consider simply asking a question:

Q (Questioner): "Is it raining?"

R (Responder): (1) "I don't want to tell you" or (2) "I can't see" or (3) “Yes” or “No”

I think you must agree that all these Q-A pairs are possible, and that because asking the question is an action, Q must be controlling some perception for which Q imagines R’s answer will reduce the error. I’m not going to enquire into that control loop here, because it brings up some issues relating to B:CP Chapter 4, and that’s an entirely different thread if and when those issues are raised.

Whatever the perception Q is controlling, R's answers may affect different perceptions Q has about R. These affected perceptions may or may not be controlled by Q, but that’s irrelevant at this point. It would be relevant to the continuation of the dialogue, but we aren’t analyzing that.

Answer 1: Q can perceive that R is not controlling for perceiving R-self as being cooperative. Q’s perception of raininess is not affected.

Answer 2: If Q currently perceives R to be controlling for being cooperative, Q can perceive that R is unable to see whether it is raining. Q’s perception of raininess is unaffected. Q must perceive R to be controlling for being cooperative, since if Q does not perceive this, R might be saying “I can’t see” because he is controlling for seeing himself as not helping Q.

Answer 3: If Q currently perceives R to be controlling for being cooperative, Q can perceive that R is able to see whether it is raining. If Q perceives R to be good at distinguishing “raining” from “not raining” states, Q’s perception of raininess is closely determined by whether the answer is “Yes” or “No” (or “lightly” or “pouring” or other modifiers).

Only if Q perceives R to be controlling for perceiving R-self to be cooperative will Answers 2 or 3 affect Q’s perception of whether R can see if it is raining or Q’s perception of raininess. So if Q wants to know whether R can see, Q has to at least test whether R is controlling for being cooperative by asking some other questions (i.e., applying different disturbances in a TCV). Q may have to act if Q’s perception of R’s cooperativeness differs from Q’s reference value for that perception.
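The logic of applying disturbances in a TCV can be sketched numerically. This is a simplified illustration under assumed dynamics, not a formal statement of the test: a hypothetical variable x = o + d is pushed around by a drifting disturbance d. If nothing controls x, it varies exactly as much as d does; if an integrating control system (gain and reference value assumed) acts on it, x varies far less than d.

```python
import random
import statistics


def disturbance_ratio(controlled: bool, n: int = 2000,
                      gain: float = 0.5, seed: int = 1) -> float:
    """Spread of x relative to the spread of the disturbance d.

    About 1 means x simply follows d (no sign of control);
    much less than 1 means something is opposing the disturbance.
    """
    rng = random.Random(seed)
    o = d = 0.0
    xs, ds = [], []
    for _ in range(n):
        d += rng.gauss(0.0, 0.1)      # slowly drifting disturbance (random walk)
        x = o + d                     # the candidate controlled variable
        if controlled:
            o += gain * (0.0 - x)     # integrating output, reference value 0
        xs.append(x)
        ds.append(d)
    return statistics.pstdev(xs) / statistics.pstdev(ds)


print(f"uncontrolled: {disturbance_ratio(False):.2f}")  # 1.00
print(f"controlled:   {disturbance_ratio(True):.2f}")
```

In the dialogue the “disturbances” are Q's other questions and the candidate variable is R's perceived cooperativeness; the numbers here only illustrate the comparison being made.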

On the other hand, if Q is interested in whether it is in fact raining, R’s cooperativeness doesn’t matter unless Q perceives R to be actively deceptive (a short form for perceiving R to be controlling a perception of Q as perceiving to be true something R perceives to be untrue – itself a short form for a long rigmarole).

At the end of all this, assuming Q perceives R to be cooperative and physically and mentally able to distinguish “raining” from “not raining”, Q has a current perception of whether it is raining. Is Q controlling that perception? Not if Q is an ordinary human, but Q probably IS controlling a perception created by a perceptual function into which the perception of raininess feeds. R has served the function of a tool, such as a telescope or a mirror, which would allow Q to perceive something otherwise too distant or obscured.

So far so good?

Now suppose that before asking the questions, Q knows whether it is raining, and has done the tests that allow R to be perceived as cooperative. I repeat the Q-A possibilities from above:

Q (Questioner): "Is it raining?"

R (Responder): (1) "I don't want to tell you" or (2) "I can't see" or (3) “Yes” or “No”

Answer (1) is very unlikely, so we consider Answers (2) and (3).

In both cases, the answer tells Q only something about R, not about raininess. If R gives Answer (2), or if R says “Yes” when Q can see that the correct answer is “No” (or vice versa), Q may perceive that R is not able to see whether it is raining. If the answer is (2), Q may also perceive that R perceives that he cannot see, whereas if it is (3), Q can perceive that R perceives that he can see, but that R’s self-perception is misguided.

On the other hand, if R's answer "Yes" or "No" agrees with Q's direct knowledge of the correct answer, that alone tells Q little: even an R who could not see at all and simply guessed would have a 50-50 chance of agreeing. If Q wants to find out whether R is able to tell the difference between raining and not raining, Q has to try the same question on different occasions, sometimes when it is raining, sometimes when it isn’t. Each time R’s answer agrees with Q’s perception, the chance that all the agreements so far are lucky guesses, rather than evidence that R sees “raining” the way Q sees it, is halved.
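The halving can be written out directly. A minimal sketch, assuming that an R who cannot distinguish raining from not raining guesses on each occasion, agreeing with Q with probability 0.5, independently across occasions:

```python
def chance_all_agreements_are_guesses(n_agreements: int) -> float:
    """Probability that a purely guessing R agrees with Q on all n occasions."""
    return 0.5 ** n_agreements


for n in (1, 2, 5, 10):
    print(n, chance_all_agreements_are_guesses(n))
# 1 0.5
# 2 0.25
# 5 0.03125
# 10 0.0009765625
```

After ten agreements in a row, a guessing R would produce such a run only about once in a thousand tries.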

It might turn out that when the rain is spotty or a light drizzle, R’s answers agree with Q’s perception of raininess 75% of the time, but when the rain is heavier or under a clear blue sky the agreement is 100%. Q may then perceive that for R to say it is raining, the rain must be, say, heavier than is required for Q to perceive it is raining. Q has learned something about how R perceives raininess, or rather about how R labels different degrees of rain.
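Q’s bookkeeping here amounts to tallying agreement separately for each kind of weather. A sketch, assuming a hypothetical record format of (weather category, Q says raining, R says raining); the trial list is invented purely to show the calculation.

```python
from collections import defaultdict


def agreement_by_category(trials):
    """Percent of trials, per category, on which R's label matches Q's.

    trials: iterable of (category, q_says_raining, r_says_raining) tuples.
    """
    counts = defaultdict(lambda: [0, 0])   # category -> [agreements, total]
    for category, q_says, r_says in trials:
        counts[category][0] += int(q_says == r_says)
        counts[category][1] += 1
    return {cat: 100.0 * agree / total for cat, (agree, total) in counts.items()}


# Invented records, for illustration only.
trials = [
    ("drizzle", True, True), ("drizzle", True, False),
    ("drizzle", True, True), ("drizzle", True, True),
    ("heavy", True, True), ("clear", False, False),
]
print(agreement_by_category(trials))
# {'drizzle': 75.0, 'heavy': 100.0, 'clear': 100.0}
```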

Translate this into the context of an experiment. Q is now the experimenter. While controlling a perception of R’s cooperativity and a perception of R’s understanding of the task, Q presents two noise bursts in quick succession, with a 500 Hz tone embedded in one of them. R has been instructed to say “Yes” if the tone is in the first noise burst, “No” if it is in the second, and never to say “I can’t hear it”.

Q: "Is the tone in the first noise burst?"

R: (1) N/A or (2) N/A or (3) "Yes" or "No" (using the numbering from above; answers (1) and (2) should not happen).

If Q has placed the tone in the first noise burst, but R says “No”, Q perceives that R did not hear the tone. But R would say “Yes” as much as 50% of the time if R did not hear it, and 100% of the time (assuming R controls perfectly for being cooperative) if R did hear it.

Q asks the question multiple times using the same intensities of noise burst and tone. Sometimes R’s answers correspond with Q’s insertion of the tone into the first or second noise burst (correct answers), sometimes they don’t (wrong answers). For each wrong answer, Q perceives that R did not hear the tone, but for each correct answer Q perceives only that R might have heard the tone. After a lot of questions, Q has a “percentage correct” score for that particular pairing of noise level and tone intensity. If the percent correct score is 50%, Q can be pretty sure R cannot hear the tone under those conditions, and if it is 100%, Q can be pretty sure R can hear it.
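The naive all-or-none view (on each trial R either hears the tone or guesses) predicts percent correct = 100 × (h + (1 - h)/2), where h is the probability of hearing. The sketch below assumes exactly that model and checks the formula by simulation; h and the function names are hypothetical.

```python
import random


def predicted_percent_correct(h: float) -> float:
    # Heard: always correct. Not heard: a guess, correct half the time.
    return 100.0 * (h + (1.0 - h) / 2.0)


def simulate_2ifc(h: float, n_trials: int = 20000, seed: int = 0) -> float:
    """Simulated percent correct for an all-or-none listener."""
    rng = random.Random(seed)
    correct = 0
    for _ in range(n_trials):
        tone_first = rng.random() < 0.5       # Q's choice of interval
        if rng.random() < h:                  # R hears the tone...
            says_first = tone_first
        else:                                 # ...or guesses
            says_first = rng.random() < 0.5
        correct += int(says_first == tone_first)
    return 100.0 * correct / n_trials


for h in (0.0, 0.5, 1.0):
    print(h, predicted_percent_correct(h), round(simulate_2ifc(h), 1))
```

Under this model an intermediate score such as 75% would be read as h = 0.5. Noise in the stimulus makes that literal reading questionable.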

But what if the percent correct is intermediate? For a given noise level and tone intensity, one might naively think that either R will hear the tone or will not hear it. But because noise is random, sometimes the noise alone will make it seem as though a tone was in the burst, and sometimes the phasing of that frequency band of the noise will cancel a tone that is actually inserted. The louder the tone, the less likely it is that this randomness will affect which noise burst is heard as having the tone. There’s a whole mathematical literature on this from the 1950s and 1960s (to which I contributed), and a mathematically ideal observer (here, a listener) can be defined. The percent correct that would be achieved by the ideal observer, plotted as a function of the relative intensities of the tone and noise, defines the ideal “psychophysical function”. No real observer can get a better score than that. The point of mentioning it here is to show that there’s a problem in interpreting percent correct literally as a measure of R’s ability to hear a tone in a noise burst.
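For one textbook case, a tone whose waveform is known exactly, added to white Gaussian noise, signal detection theory gives the ideal observer's two-interval score in closed form: d' = sqrt(2E/N0) and P(correct) = Φ(d'/√2) = Φ(sqrt(E/N0)), where E is the tone energy, N0 the noise power spectral density, and Φ the standard normal CDF. The sketch below assumes that case only; other assumptions about the signal give different curves.

```python
import math


def normal_cdf(z: float) -> float:
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def ideal_percent_correct(e_over_n0: float) -> float:
    """Ideal-observer percent correct, two-interval forced choice.

    Assumes a signal known exactly in white Gaussian noise.
    """
    d_prime = math.sqrt(2.0 * e_over_n0)
    return 100.0 * normal_cdf(d_prime / math.sqrt(2.0))


for ratio in (0.0, 0.25, 1.0, 4.0):
    print(ratio, round(ideal_percent_correct(ratio), 1))
# 0.0 50.0
# 0.25 69.1
# 1.0 84.1
# 4.0 97.7
```

The curve rises smoothly from chance, so an intermediate score is exactly what even a perfect listener produces at intermediate tone-to-noise ratios.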

The statistical problem is actually quite easily handled, and there’s a big literature about it. The only point in mentioning it is to illustrate that even if there are statistical issues of interpretation, Q can learn something about R’s ability to hear by doing something that is directly equivalent to asking a simple question to determine whether R knows something or is able to do something. The “larger control loop” is the one in which Q controls for R to understand the question and to be cooperative in answering it.

Here I'm going to quote from an old message of mine [Martin Taylor 2012.12.08.11.32] about measurement. It deals with measuring an inanimate property, in this case the weight of a stone.

-----------start quote--------

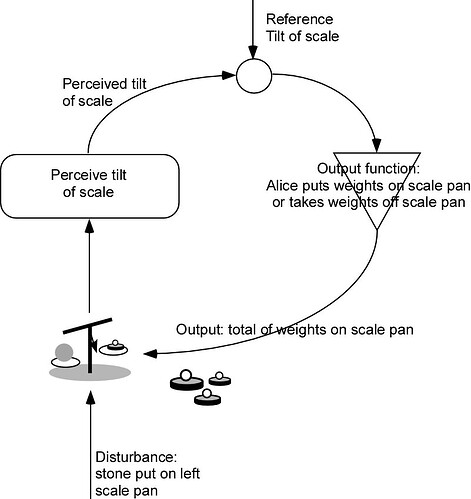

To see why control can be considered to be measurement, think of this example. Alice wants to know how heavy a stone she has picked up is. She has a balance scale and a set of weights weighing 2, 1, 1/2, 1/4 … kilos. She puts the stone on one pan, and the scale tilts down to the side on which she put the stone, so she puts a weight on the other pan. The tilt stays the same, so she adds another weight and the scale tilts the other way. Alice removes the last weight she added and adds the next lighter one. She keeps adding and removing weights until the scale stays level or she runs out of ever smaller weights.

What is Alice doing? Alice is performing the actions of the output function of a control loop, looking at the error that is shown by the tilt of the scale, and altering her output (the weights on the other pan) until the error is zero. The output, which is the sum of the weights in the other pan, now is a measure of the weight of Alice’s stone in exactly the same way that the output of any control system measures the disturbance to its controlled environmental variable.

Of course, there need be no human Alice in this story. The perceptual function signals only the direction in which the scales tilt, so the error is only a binary value, which could be called “1” or “0”, “left” or “right”, “too heavy” or “too light”, or any other contrasting labels. I will call the values “left” and “right” according to which side of the scale is heavier. Likewise, there is no need for Alice to provide the output function. It could be a mechanical device that is provided with the weights that have values in powers of two times 1 kilo, with 2 kilos the heaviest. We can assume that the scale would break if the stone was over 4 kilos!

The output device would load and unload these weights onto and off the scale pan according to the following algorithm. The stone is on the left pan.

1. Add to the right-hand pan the heaviest weight not yet tried (initially, since none have been tried, that means the 2 kilo weight).

2. If all the available weights have been tried, stop. Else...

3a. If the error is "left", go to step 1 (there is not enough weight in the right-hand pan).

3b. If the error is "right", remove the lightest weight in the pan and add the heaviest weight not yet tried, then go to step 2.

At the end of this process, the balance is as close as the machine (or Alice) can make it using the available weights. Anyone who wanted to know the weight of the stone could simply read out the weights in the pan as a binary number of kilos, with the digits starting at the 2 kilo weight. Those weights are the current output value of the control system, which, without Alice, is a perfectly standard control loop.

---------------end quote--------
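The quoted weighing procedure translates almost step for step into code. This is a sketch under stated assumptions: a finite set of weights 2, 1, 1/2, … kilos (the count, `n_weights`, is a hypothetical parameter), a perceptual function that reports only “left” or “right”, and, as an added assumption not in the quoted steps, that the machine stops early if the scale balances exactly. `Fraction` keeps the arithmetic exact.

```python
from fractions import Fraction


def weigh(stone, n_weights: int = 8):
    """Successive-approximation weighing with a binary ("left"/"right") error.

    The stone is on the left pan; returns the weights left on the right pan.
    """
    untried = [Fraction(2, 2 ** i) for i in range(n_weights)]  # 2, 1, 1/2, ...
    pan = [untried.pop(0)]                 # step 1: heaviest untried weight
    while untried:                         # step 2: stop once all are tried
        if sum(pan) == stone:              # assumption: stop if exactly level
            break
        error = "left" if stone > sum(pan) else "right"
        if error == "right":               # step 3b: too much weight, so
            pan.remove(min(pan))           # remove the lightest in the pan
        pan.append(untried.pop(0))         # step 1 (via 3a) or rest of 3b
    return pan


def read_out(pan, n_weights: int = 8) -> str:
    """The weights in the pan as binary digits, 2 kilo weight first."""
    return "".join("1" if Fraction(2, 2 ** i) in pan else "0"
                   for i in range(n_weights))


pan = weigh(Fraction(11, 4))          # a 2.75 kilo stone
print(sum(pan), read_out(pan))        # 11/4 10110000
```

Reading 10110000 with the binary point after the second digit gives 10.11 = 2.75 kilos. The final pan contents are the loop's output, and they match the disturbance (the stone) to within the smallest weight.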

This "weighing a stone" procedure describes in principle any measurement, whether done by a human or a machine. In particular, the entity measured might be some property of an organism. The “stone on the left pan” might be the ability of a subject to discriminate between the brightnesses of two patches. The balance “Left” or “Right” could be whether the subject’s response is right or wrong. If the subject was correct, reduce the brightness difference (remove a weight from the pan), else increase the brightness difference (add a weight to the pan).
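The brightness version can be sketched the same way. All of the following are hypothetical assumptions: a noise-free subject who is correct whenever the brightness difference is at or above some threshold, differences in arbitrary units, and a step size that halves on every trial, like the halving weights. Real subjects are noisy, which is why practical procedures alter this simple rule.

```python
from typing import Callable


def staircase(is_correct: Callable[[float], bool], start: float = 1.0,
              step: float = 0.5, n_trials: int = 20) -> float:
    """Adjust a stimulus difference until the subject is just at threshold.

    Correct response: make the task harder (like removing a weight).
    Wrong response: make it easier (like adding a weight).
    """
    diff = start
    for _ in range(n_trials):
        if is_correct(diff):
            diff -= step
        else:
            diff += step
        step /= 2          # ever smaller "weights"
    return diff


def noise_free_subject(diff: float) -> bool:
    # Hypothetical subject: correct whenever the difference is >= 0.3.
    return diff >= 0.3


print(round(staircase(noise_free_subject), 4))   # 0.3
```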

There are two problems with this when the measurement is of a property of an animate entity. One is that the result is inherently noisy for a variety of reasons. That’s not interesting here because there are statistical techniques for reducing the effect of the noise by altering the algorithm. The other is that the subject has to be controlling a perception for which the error is reduced by reporting accurately to the best of her ability – colloquially, the subject must try to get it right, which we covered above.

I hope this long-winded explanation may at least guide you to answering your own question, if it doesn’t do so directly.

Martin