[From Bruce Abbott (2012.12.13.1355 EST)]

Rick Marken (2012.12.12.1835)–

BA: Bruce Abbott (2012.12.12.2030 EST)

RM: To determine whether a particular variable is under control using disturbances to the hypothetical controlled variable to see if there is resistance (per “The Test”) what you should look at is the relationship between disturbances and the hypothetical controlled variable (as I do in my demo of the test at http://www.mindreadings.com/ControlDemo/Mindread.html) rather than the relationship between disturbances and outputs…

BA: True enough, but what does this have to do with an information analysis?

RM: You said "Neither Martin nor I have claimed that the relation between disturbance and output reveals anything about the organism, other than the fact that it is controlling the variable in question", which sounded to me like you use informational analysis to test for controlled variables.

Is this some kind of Orwellian Newspeak? I asserted that IF a variable is under control, THEN information in the disturbance will be passed to the feedback variable. I did not describe a test for the controlled variable, but a fact that is true if the variable is under control.

RM: When you measure the information in the output about the disturbance to a controlled variable you are treating the organism as a communication channel: communicating information about d to o. So you are measuring a characteristic of the organism. But PCT shows that the relationship between disturbance and output has nothing to do with the organism (when control is good). So what you are actually measuring is characteristics of the feedback and disturbance function. You think you are measuring a characteristic of the organism (its ability to transfer information about the disturbance to its output) but what you are actually measuring is the nature of the feedback and disturbance functions of a control loop. You (and Martin) have fallen for the behavioral illusion hook, line and sinker.

BA: If you transmit a message over the phone and I measure the information I receive in the message, am I measuring a characteristic of the communication channel, or of the message received? Clearly, I am NOT measuring a characteristic of that channel (unless the channel imposes some limit on the information it transmits).

RM: So you are using information theory to measure a characteristic of the disturbance, which is analogous to the message when you are analyzing the information about the disturbance in the output. How much do you learn about the message in the little demo I just posted? (http://www.mindreadings.com/ControlDemo/InfoDist.html)

Martin and I have already explained this. The information is completely recoverable in your little demo.

BA: I was hoping to gain some insight into how you have come to this conclusion (that you and Martin have fallen completely for the behavioral illusion) by reading your answers to my two simple questions. Sadly, I am still awaiting them.

RM: I thought I answered them. But hopefully this post will give you some idea of how I came to the conclusion that you (and Martin) have fallen (willingly, apparently, since you guys know PCT) for the behavioral illusion.

BA: No, it completely evades my questions. To save you the trouble of looking them up, here they are again:

BA: Now, here’s a question for YOU, if you’re up to the challenge. When control is excellent, with high gain, and the reference signal is constant, how is it that the pattern of variation of the disturbance is mirrored by the pattern of variation of the feedback to the CV, without that pattern being evident in the error signal?

RM: I do remember answering them. You probably just didn’t like my answers. I’ll try again.

I wish you would point me to the post in which you did, because I certainly don’t recall seeing it.

RM: The first thing to understand is that the mirroring of output (which is what I presume you mean by feedback to the CV) and disturbance only occurs when the feedback and disturbance functions are constants and 1.0 (as they typically are in our tracking tasks).

Feedback to the CV is just what it says it is. It’s what comes out of the environmental feedback function and affects the CV in such a way as to oppose the effect of the disturbance on the CV. Because the disturbance and feedback are mirror images (if control is excellent), then whatever information appears in the feedback must have come from the disturbance. There is no other place from which it could have come. There is no logical escape from that fact, because by definition, a variable is under control to the extent that the feedback opposes the disturbance. The question we are addressing is not whether that information is present (it logically has to be), but how it got there.
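A minimal simulation sketch of the simple case under discussion may help here. Everything in it is an assumption chosen for illustration rather than taken from either demo: an integrating output function, a loop gain of 200, a constant reference of zero, feedback and disturbance functions both equal to 1, no noise, and a disturbance made of two sine waves. The names `run_loop`, `corr` and `rms` are hypothetical helpers.

```python
import math

def corr(x, y):
    """Pearson correlation of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)

def rms(x):
    """Root-mean-square value of a sequence."""
    return math.sqrt(sum(a * a for a in x) / len(x))

def run_loop(gain=200.0, dt=0.001, steps=20000, reference=0.0):
    """Simulate one control loop; all parameter values are illustrative."""
    output = 0.0
    d_hist, fb_hist, err_hist = [], [], []
    for k in range(steps):
        t = k * dt
        # Assumed disturbance waveform: two sine components.
        d = math.sin(2 * math.pi * 0.2 * t) + 0.5 * math.sin(2 * math.pi * 0.5 * t + 1.0)
        feedback = output            # feedback function assumed to be 1
        cv = feedback + d            # disturbance function assumed to be 1
        error = reference - cv       # perception assumed equal to the CV
        d_hist.append(d)
        fb_hist.append(feedback)
        err_hist.append(error)
        output += gain * error * dt  # integrating output function
    return d_hist, fb_hist, err_hist

d, fb, err = run_loop()
print("correlation(feedback, disturbance):", corr(fb, d))
print("rms(error) / rms(disturbance):", rms(err) / rms(d))
```

Under these assumptions the feedback waveform comes out as a near mirror image of the disturbance, while the error signal remains a small fraction of the disturbance amplitude.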

If you don’t understand that fact, then there’s no point in my going further with this exchange.

RM: The disturbance pattern will be evident in the error signal in this case (though at a phase lag I believe) if there is no noise in the loop.

Yes.

RM: If there is noise in the loop (as there is in normal human controlling) then the disturbance pattern will not be evident at all in the error signal;

Incorrect. It may be difficult to perceive, but it will still be there, mixed in with the noise.

RM: the closed loop integration filters out this noise so that control is still nearly perfect;

And to that extent potentially removes some of the information in the disturbance. For example, the higher-frequency components may be attenuated or eliminated. (It depends on what one takes to be the information that is being transmitted through the channel provided by the control system.)
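The attenuation of higher-frequency components can be sketched numerically. The example assumes an integrating output function with a deliberately modest gain of 20 (so that the effect is visible), unit feedback and disturbance functions, and a disturbance with one slow (0.2 Hz) and one fast (20 Hz) sine component of equal amplitude. The function names and all numbers are illustrative assumptions.

```python
import math

def amplitude_at(signal, freq_hz, dt):
    """Amplitude of one sinusoidal component, by projection onto sin and cos."""
    n = len(signal)
    w = 2 * math.pi * freq_hz
    s = sum(x * math.sin(w * k * dt) for k, x in enumerate(signal))
    c = sum(x * math.cos(w * k * dt) for k, x in enumerate(signal))
    return 2.0 * math.hypot(s, c) / n

def feedback_waveform(gain=20.0, low_hz=0.2, high_hz=20.0, dt=0.001, steps=20000):
    """Feedback to the CV for a disturbance with a slow and a fast component."""
    output = 0.0
    fb_hist = []
    for k in range(steps):
        t = k * dt
        # Both components have unit amplitude in the disturbance.
        d = math.sin(2 * math.pi * low_hz * t) + math.sin(2 * math.pi * high_hz * t)
        cv = output + d              # feedback function assumed to be 1
        error = 0.0 - cv             # constant reference of zero
        fb_hist.append(output)
        output += gain * error * dt  # integrating output function
    return fb_hist

fb = feedback_waveform()
low_amp = amplitude_at(fb, 0.2, 0.001)
high_amp = amplitude_at(fb, 20.0, 0.001)
print("slow component in feedback:", low_amp)
print("fast component in feedback:", high_amp)
```

With these values the slow component appears in the feedback at close to its full amplitude, while the fast component is strongly attenuated, so that part of the disturbance waveform is only partly represented in the feedback.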

RM: the output nearly perfectly mirrors the disturbance despite this noise. The reason this works, I believe, is because the noise is like an additional disturbance to the controlled variable. So, for example, if the noise, e, is added to the error signal, the output will be o + e. So the input will be o+d+e and the error signal will be r-(o+d+e) and the output driven by this error will net cancel out its own noise (that’s the closed loop filtering process).

If the noise is added to the error signal and does not originate in the CV or in the input function, then it will act like a change of reference level. The CV and perception will change even though the noise has not acted as a disturbance to the CV, thus introducing unwanted variation in the CV and the perception. If the noise is introduced in the perceptual input function (e.g., sensor noise), the system will act to oppose the effect of the noise on the perception while introducing variation in the CV. If the noise is introduced in the CV, then it’s just part of the overall disturbance and the feedback will mirror the effect of this overall disturbance.
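The three injection points can be compared in a small simulation. This is a sketch under stated assumptions, not a model of any particular experiment: an integrating output function with gain 200, a constant reference of zero, unit feedback and disturbance functions, and slowly varying noise produced by smoothing seeded Gaussian samples. The function `run` and the site labels `"error"`, `"sensor"` and `"cv"` are hypothetical names.

```python
import math
import random

def corr(x, y):
    """Pearson correlation of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)

def rms(x):
    """Root-mean-square value of a sequence."""
    return math.sqrt(sum(a * a for a in x) / len(x))

def run(noise_site, gain=200.0, dt=0.001, steps=20000, reference=0.0, seed=1):
    """Run the loop with noise injected at 'error', 'sensor' or 'cv'."""
    rng = random.Random(seed)        # same seed, so every site sees the same noise
    output, noise = 0.0, 0.0
    hist = {"d": [], "n": [], "cv": [], "p": [], "fb": []}
    for k in range(steps):
        t = k * dt
        d = math.sin(2 * math.pi * 0.2 * t) + 0.5 * math.sin(2 * math.pi * 0.5 * t + 1.0)
        noise += 0.001 * (rng.gauss(0.0, 15.0) - noise)   # slow, smoothed noise
        cv = output + d + (noise if noise_site == "cv" else 0.0)
        p = cv + (noise if noise_site == "sensor" else 0.0)
        error = reference - p + (noise if noise_site == "error" else 0.0)
        for key, value in (("d", d), ("n", noise), ("cv", cv), ("p", p), ("fb", output)):
            hist[key].append(value)
        output += gain * error * dt  # integrating output function
    return hist

err_run = run("error")
sen_run = run("sensor")
cv_run = run("cv")
total_dist = [a + b for a, b in zip(cv_run["d"], cv_run["n"])]

print("error noise:  corr(CV, noise) =", corr(err_run["cv"], err_run["n"]))
print("sensor noise: corr(CV, noise) =", corr(sen_run["cv"], sen_run["n"]))
print("sensor noise: rms(perception) / rms(CV) =", rms(sen_run["p"]) / rms(sen_run["cv"]))
print("CV noise:     corr(feedback, d + noise) =", corr(cv_run["fb"], total_dist))
```

In this sketch, noise added to the error signal pulls the CV along with it, as a shift in reference level would. Sensor noise leaves the perception steady while the CV varies inversely with the noise. Noise added at the CV is simply opposed as part of the total disturbance.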

BA: Follow-up question: In the case described above, the feedback waveform almost perfectly matches the disturbance waveform. In what sense is it that the feedback waveform tells you nothing about the disturbance waveform? (Nothing = no information in the disturbance waveform appears in the feedback waveform.)

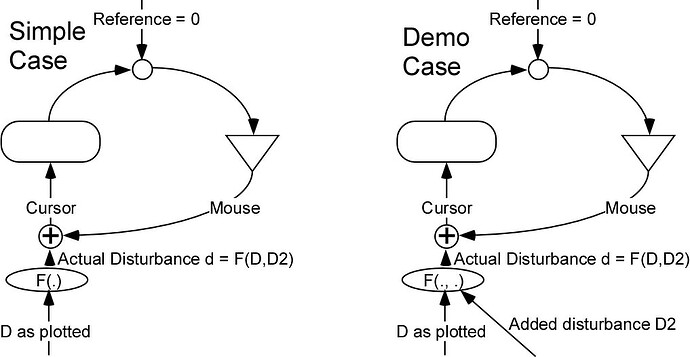

RM: Because the observed output waveform is not necessarily a mirror of the disturbance. For example, suppose the output waveform that you observe is a perfect sine wave. You say that this means that the disturbance is also a sine wave, but 180 degrees out of phase. But it could be that the disturbance is a sine wave that is 0 degrees out of phase with the output. This would be true if, unknown to you, the feedback function were = -1. Or if the disturbance function were -1. Or the disturbance might be a sine and cosine wave that add up to produce the total disturbance to the CV. There are many different reasons why you are seeing a particular output waveform other than that it is a mirror of a disturbance variable. Consider again, for example, my area/perimeter control study. There we see a change in the output waveform with no change at all in the disturbance variable; the change is a result of controlling a different perception.

In each of these cases, the feedback to the CV must be a mirror image of the disturbance if control is excellent. If it isn’t, then control isn’t excellent.
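The distinction between the output and the feedback to the CV can be checked with a short sketch. It assumes an integrating output function with gain 200, a constant reference of zero, and constant feedback and disturbance functions `kf` and `kd` (hypothetical names). It also assumes that the sign of the output gain is set to match the sign of `kf`, so that the loop remains a negative feedback loop.

```python
import math

def corr(x, y):
    """Pearson correlation of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)

def run_loop(kf=1.0, kd=1.0, gain=200.0, dt=0.001, steps=20000, reference=0.0):
    """Loop with constant feedback function kf and disturbance function kd."""
    # Assumption: the output gain takes the sign of kf, keeping feedback negative.
    out_gain = gain if kf > 0 else -gain
    output = 0.0
    hist = {"d": [], "o": [], "fb": [], "de": []}
    for k in range(steps):
        t = k * dt
        d = math.sin(2 * math.pi * 0.2 * t)   # assumed sine-wave disturbance
        feedback = kf * output                # feedback to the CV
        dist_effect = kd * d                  # disturbance's effect on the CV
        cv = feedback + dist_effect
        error = reference - cv
        for key, value in (("d", d), ("o", output), ("fb", feedback), ("de", dist_effect)):
            hist[key].append(value)
        output += out_gain * error * dt       # integrating output function
    return hist

for kf, kd in ((1.0, 1.0), (-1.0, 1.0), (1.0, -1.0)):
    h = run_loop(kf, kd)
    print("kf =", kf, "kd =", kd,
          "corr(output, d) =", corr(h["o"], h["d"]),
          "corr(feedback, disturbance effect) =", corr(h["fb"], h["de"]))
```

In this sketch the output is in phase with the disturbance variable when either function is -1, but in all three cases the feedback to the CV remains a near mirror image of the disturbance's effect on the CV.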

Furthermore, I specifically stated that your analysis was to be applied to the simple case I described. Your response was to bring up some other case in which you believe that the output would not mirror the disturbance, thereby avoiding answering my question about the case at hand.

RM: So these are the reasons why I would say that, in general, the output of a control system contains no information about the disturbance(s) to the variable being controlled by the system.

RM: Hope I passed the audition.

No, you failed miserably. But I still hold out hope for you, if you really want to understand. It’s not that difficult.

Bruce