I'm sorry to jump in so late. I must admit that I didn't read everything closely, so maybe I didn't understand something correctly. But I really couldn't ignore some statements, which were quite clear to me.

BP :

I know you admired Ashby’s work in the past. For a long time, so did I, until I worked out my own understandings of control processes and saw all his mistakes. But we can’t ignore those after we know about them, and I long ago saw Ashby as reduced to the status of a highly intelligent and very clever dilettante. It’s still easy to find things in his writings to admire, but he spread more poison than fertilizer and it is still contaminating people.

HB :

I think this is unnecessary toward a teacher you admired for so long. If you admired him for a long time (I concluded that it could have been at least until 1998), then I think your statement is beneath your level.

If you needed a long time to work out your own understanding of control processes before you saw all of Ashby's mistakes, then I think your knowledge is based on his knowledge, with improvements (eliminating mistakes). That's the way I understand it. So I think that his work was the basis on which you worked out your own theory. I also concluded that he was in some sense your teacher. And I think he was a good teacher, because the student exceeded his knowledge.

For me he was a pioneer in researching the design of a brain on the basis of ultrastability with feedback, and I think we should respect him for this superb vision, as that was quite a long time ago (1952). It's easy to look at work from 60 years ago through the eyes of progress and say, well, what stupid dilettantes they were at that time.

Can we really look upon history with such criticism? Can we really look upon the history that laid some supporting stones in the development of human knowledge as something that spread more poison than fertilizer and is still contaminating people? And the critic himself was poisoned and contaminated by the same knowledge for a long time.

I look on Ashby more as somebody who probably opened your eyes, and ours, since you were blind, if I understood you right.

BP (23.12.2010) :

Something is coming together that is making sense of some ideas I have resisted for a long time. It has to do with the brain’s models of the external world. From the way I have seen those models proposed by others such as Ashby and Modern Control Theory adherents, I have thought they were simply impractical, calling for far too much knowledge, computing power, and precision of action – as indeed they are and they do, as they have been presented.

But those ideas may nevertheless be right. Some of those other blind men standing around the elephant are perhaps only a little nearsighted, and are seeing something going on that looks fuzzily like modeling, but there’s something funny about it so it isn’t quite how it seems from this angle or that. This particular blind or nearsighted man writing these sentences has not seen models; he has seen a hierarchy of perceptions that somehow represents an external world, and a large collection of Complex Environmental Variables (as Martin Taylor calls them) that is mirrored inside the brain in the form of perceptions.

Briefly, then: what I call the hierarchy of perceptions is the model. When you open your eyes and look around, what you see – and feel, smell, hear, and taste – is the model. In fact we never experience ANYTHING BUT the model. The model is composed of perceptions of all kinds from intensities on up.

HB :

So I think it's not right that you try to underestimate Ashby's contribution to understanding how the brain works adaptively; as you see, you are still discovering details of his vision of the elephant.

I keep repeating that your theory is very good and your idea very promising, but I also have in mind the whole range of organization of control units, not ONLY analyzing one control unit to infinity and in all possible figures. I think you have to move on somewhere, as time is passing very quickly.

Carver and Scheier also write about your theory as partly useful, and say that you didn't do enough on the hierarchy (they are probably the only psychologists outside CSGnet who have seriously taken up your theory).

Maybe they didn't understand you quite well. As you maybe didn't fully understand Ashby.

My opinion is that you will never be able to answer what life is, in which control systems play a remarkable part, if you do not start to work seriously on the organization of control from the genetic source to system concepts.

I tried to play a little with this hierarchy (as it seems that nobody else wants to, since you and Rick are mostly playing with details in one unit or with the unit as a whole), and I discovered some interesting things.

One is that I couldn't work only with your principles in detail. I also tried with Ashby's diagram of immediate effects and it didn't work either. Then I tried with both theories (all the principles I could find) together, and I must say that I noticed some progress. So far it is even working.

I think that Ashby had a great vision and that he was really a very intelligent and smart man, not just a very clever dilettante. His central question, as I understood him, was:

The origin of the nervous system's unique ability to produce adaptive behavior, or simply how the brain produces adaptive behavior.

It seems to me that you and Rick are trying to analyze something else. I have the impression that you are researching the control unit in detail with two or three experiments (mostly the tracking experiment). It also seems to me that you are not progressing toward finding out what life is, or what the difference is between a live and a dead horse, as Ashby used to compare. It looks to me as if you want to analyze control in detail and are simply overlooking the problem of the way control units are organized, which is the main problem of life.

I see Ashby's work as pioneering work. If that is not so and you think it wasn't, I'd be grateful if you showed me who in his time, or before it, wrote about the design of the brain on the basis of ultrastability. I'm still wondering why he would have written a book if everything was so clear about the questions of brain and life.

The concepts of organization, behavior, change of behavior, part-whole, dynamic system, coordination (!!!), equilibrium, local stabilities, etc., as I see it, are still in the game, because I don't see how you solved some of these problems in the control processes of the whole organism.

His whole vision of eliminating intrinsic errors is in my opinion still right on a high abstract level. Yours failed in some parts (figure on page 191 in B:CP, 2005). Although you both used double feedback (which I think is his idea) to eliminate intrinsic error, which in my opinion is a little misleading, I think his is better on some generalized level.

But on the level of structural abstraction you are, in my opinion, absolutely dominant, with your innovation of organizing identical control units and your perfect analysis of one control unit. But as I see it, you still don't answer how intrinsic error is eliminated. Your model (picture on page 191, B:CP, 2005) doesn't show that. And I think Ashby managed that, though with some weaknesses in some parts, which you successfully improved. Whatever. I can work only with both theories. A single theory doesn't offer me enough conceptual support for building the whole picture of how organisms function. Both do.

So conversations that end with accusing and defending who is S-O-R and who is not seem useless to me. Criticizing and trying to eliminate the historical knowledge of some eminent people also seems useless to me.

I don't see the problem on the side of Martin and Bruce. But I must admit I'm wondering why they were “attacked” again. And Martin T. helped me with his post. I quite agree with him.

They are people who may have some different ideas, which could show another perspective on how the nervous system and the organism as a whole work in the environment. I think Bruce A. and Martin T. are serious people worthy of respect. I really admire both of them for their past contributions to PCT, and I'm thankful to both of them for the quite long conversations I had with them. I learned much.

In my opinion you, Bill, started the avalanche with your own understanding of control processes, and the main things are yet to be done. Why lose energy on endless discussions about whether some knowledge has to be included or not? We can discuss it, but with patience and mutual respect, from different perspectives and on different control levels in the organism.

As I noted before, my opinion is that you are the pioneer of the organization of control units, and I'm sure the next generations will undoubtedly improve your ideas, as Ashby's ideas were improved by you and some others. I see Maturana as one of them.

But what you didn't do is create the whole picture of how the organism works with horizontal and hierarchical organization of control units. You are good at details about the control unit and at some aspects of the organization of control units (I can easily take my hat off to that), but the main point is missing. How does all your knowledge work in the natural environment interacting with organisms and their inner organization?

So I see Ashby as a pioneer in the field of control of aims, goal-seeking behavior that minimizes displacement in essential variables (ultrastability), design for a brain, etc., and I see you as a pioneer of a different perspective on the problem of life, “control”. I also like Martin T.'s perspective and Bruce A.'s perspective, and even Rick's, when he is conversing normally.

But I also think that if there had been no work by Ashby, who knows whether you would have come to your own understanding of control processes in the organism after your long admiration of Ashby's work, and whether we would have come to our understanding of control processes as we understand them today. I'm thankful to both of you.

But I still think it's right to seek other ways as well, to find more and more possible perspectives that will enable more solutions to the problems PCT has. And Bruce and Martin, as I see it, tried to show that. At least to me. They are maybe not satisfied with all of PCT's truth, and they try to seek more possibilities.

So I'm thankful to all of you for a thoughtful debate, also to Rick (within a certain range) :). But I would rather see you stay within the range of arguments, not resort to songs and other attempts at degradation (control), as Rick did. Could this really be the final point of PCT conversations? To prove who is S-O-R? Does such a conversation lead anywhere?

I like Erling Jorgensen’s interpretation of such a debate :

My background is not mathematics. While I have a Ph.D. degree, I always have to follow closely those who can translate the mathematical concepts into more intuitive ways of understanding them. However, there are some points, & indeed fallacies, that have only been lightly touched upon in this discussion. And I need to see if my understanding matches that of others.

Why not give more attention to all possible understandings and perspectives of the problem you were talking about? I think this could then be a multicultural, multilingual, multi-conceptual approach which could make everybody richer and happier, as they would understand what the conversation is about and could contribute. Isn't that one of the goals of PCT? Isn't that one of the possible ways to improve PCT knowledge and spread it among people?

Best,

Boris

----- Original Message -----

From: Bill Powers
To: CSGNET@LISTSERV.ILLINOIS.EDU
Sent: Wednesday, December 05, 2012 7:03 PM
Subject: Re: Ashby’s Law of Requisite Variety

[From Bill Powers (2012.12.05.0950 MST)]

[Bruce Abbott (2012.12.05.0740)] -- Bill Powers (2012.12.04.1723 MST) -- Bruce Abbott (2012.12.04.1955 EST)

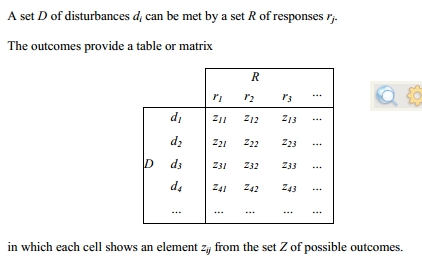

BA previously: I don't find anything in Ashby's paper about behavior being noisy and statistical. The argument is simply that noise in a transmission channel (which could take the form of nonrandom variation such as that induced by channel cross-talk, by the way) is logically equivalent to disturbance-induced variation in a controlled variable. Thus, an isomorphism exists between Ashby's Law of Requisite Variety and Shannon's Theorem 10.

BP: But in a noise-free channel, that theorem is irrelevant, isn't it?

BA: You're missing the point. Perhaps another example will clarify. Assume that you have a control system with a continuously varying reference signal. In addition, a continuously varying disturbance is acting on the controlled variable. The perceptual signal emerging from the input function varies as a function of both. It represents the desired values of the perceptual signal, plus noise added to that signal by the disturbance. That's the noise we're talking about. We're not talking about any other source of noise in the system.

BP: OK, I see how this applies to the first part of the paper. You’ll notice that the elements of Ashby’s analysis are not continuous variables, but “events” which either occur or don’t occur. This is on page 2:

This is the traditional way of defining probabilities, information, uncertainty, and so on. The variables are occurrences which either happen or don’t happen. If you count all the things that might happen, perhaps with accompanying relative probabilities, you can use that number to compute the probability that a particular one will happen. But nothing ever happens partway.

The main effect of using this discrete-variable approach is to bypass the problem of quantitative accuracy. Ashby does this a lot, by giving examples in which the variables have values that are small integers or just logical states. If the reference signal is 1 and the perceptual signal is 2, the error, r - p, is -1, which in one form of controller should lead to an output effect of -1 that will reduce the perceptual signal to 1 and the error to 0, giving perfect control. Of course no physical system works that way. The example on page 3 is set up and discussed that way, with the outcomes being rated either Good or Bad.

BA: In a control system, we have access to the reference signal. We can subtract the perceptual signal from the reference signal; what is left is the disturbance waveform, otherwise known as the error signal. The error signal drives the output, which negatively feeds back onto the controlled variable to oppose the effect of the disturbance on the controlled variable. If the output of the system perfectly cancelled the effect of the disturbance, the error signal would never vary from zero. Consequently, there would be no variation in the output to cancel out variation in the CV due to the disturbance. Conclusion: perfect control in such a system is impossible.

BP: This is the conclusion you reach when thinking in terms of discrete variables or small whole numbers. As you pointed out in yesterday’s post, however, the concept of loop gain is missing from this approach. When you take loop gain into account you find that you can get the error as small as you please. Also, only simple proportional control is considered. If the output function is an integrator, the output variable becomes a time series which converges toward a final value of zero error. How rapidly it converges depends on the gain of the integrator. Strictly speaking, control is still not perfect until you’ve waited an infinite time. But if the first iteration corrects 90% of the error, the second one reduces the error to 1% of its initial value, and so on, and at some point the error becomes smaller than the system’s own internal noise level.
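The convergence argument for an integrating output function can be sketched numerically. This is only an illustration under assumptions that are not in the post: a discrete-time loop, an environment in which the perceptual signal is simply output plus disturbance, and a hypothetical function name `integrator_errors` with an integrator gain `k`. Under those assumptions the error shrinks by the factor (1 - k) on every iteration, so with k = 0.9 each pass leaves one tenth of the previous error.

```python
def integrator_errors(r, d, k, steps):
    """Return the error seen at each iteration of a discrete integrating controller.

    Assumed model (illustrative only): p = o + d, e = r - p, o <- o + k * e.
    """
    o = 0.0                 # output starts at zero
    errors = []
    for _ in range(steps):
        p = o + d           # perceptual signal: output plus disturbance
        e = r - p           # error signal
        errors.append(e)
        o += k * e          # integrator accumulates a fraction k of the error
    return errors


if __name__ == "__main__":
    errs = integrator_errors(r=10.0, d=0.0, k=0.9, steps=6)
    for i, e in enumerate(errs):
        # Print the remaining error as a fraction of the initial error.
        print(i, e / errs[0])
```

The error never reaches exactly zero in finite time, but it falls below any fixed noise floor after a few iterations, which is the point being made about "perfect" control.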

BA: In information theory, the channel that conducts the error signal to the output function conveys information about the disturbance. In a perfect control system, that channel is blocked with respect to the flow of information because the error signal never varies.

BP: That is an artifact of the method of analysis, and requires ignoring variations in the reference signal. When you neglect all real sources of disturbance in the controller itself, and measure continuous variables as small integers, and assume perfect ability to convert signals into other signals or physical effects of the correct magnitude, of course you come out with perfect control using Ashby’s model, and the problem of zero error (not) producing the right output in the closed-loop model. But Ashby’s model applies only in an imaginary idealized environment, and for control systems built of imaginary perfect materials, with computers of infinite speed and precision inside them.

In the real control system, you can solve the equations to get the steady-state value of the variables, and you find that the system ends up with a specific amount of error which can be reduced to the limits of our devices to detect errors by using high enough loop gain and taking pains to stabilize the loop (a problem never mentioned by Ashby).
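A minimal sketch of that steady-state calculation, assuming a simple proportional loop that is not spelled out in the post: p = o + d, e = r - p, o = G * e, which solves to e = (r - d) / (1 + G). The function names and the `slowing` factor used to keep the discrete simulation stable are illustrative assumptions.

```python
def steady_state_error(r, d, gain):
    """Closed-form steady-state error for the assumed loop: e = (r - d) / (1 + G)."""
    return (r - d) / (1.0 + gain)


def simulated_error(r, d, gain, steps=200):
    """Iterate the same loop with a leaky-integrator output so it stays stable."""
    slowing = 0.5 / (1.0 + gain)   # chosen so each step halves the distance to steady state
    o = 0.0
    for _ in range(steps):
        e = r - (o + d)                  # error with the current output
        o += slowing * (gain * e - o)    # output moves toward gain * e
    return r - (o + d)


if __name__ == "__main__":
    for gain in (10.0, 100.0, 1000.0):
        # Higher loop gain gives a smaller, but nonzero, residual error.
        print(gain, steady_state_error(10.0, 3.0, gain), simulated_error(10.0, 3.0, gain))
```

The residual error is never zero, yet it is enough to sustain the output that opposes the disturbance, and it shrinks in proportion to 1 / (1 + G). Without the slowing factor a high-gain discrete loop like this one would oscillate, which is the stabilization problem mentioned above.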

BA: Information theory simply offers another way to analyze the operation of a control system. It's just another tool. Whether it's a useful tool in this context depends on the purposes of the investigator.

BP: I very strongly dispute those claims in relation to control systems. It is a blunt tool that works only with imaginary systems in an imaginary environment. It comes up with very clear conclusions, which is a strong reason to reject it because the conclusions are wrong for any real control system.

I know you admired Ashby’s work in the past. For a long time, so did I, until I worked out my own understandings of control processes and saw all his mistakes. But we can’t ignore those after we know about them, and I long ago saw Ashby as reduced to the status of a highly intelligent and very clever dilettante. It’s still easy to find things in his writings to admire, but he spread more poison than fertilizer and it is still contaminating people.

His generalizations and “laws” are banalities or trivial truths when translated into simpler engineering language. Of course the output of a control system has to be able to vary enough, and rapidly enough and in the right ways, to counteract the effects of the disturbances that are most likely to happen. Who on Earth would ever have thought otherwise? And who would ever have the nerve to dress that quite obvious fact up as a law with a fancy name?
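The engineering version of that statement can be shown with a toy loop. This sketch assumes an integrating controller whose output is clipped to a limit, with p = o + d; the function name `worst_error` and all parameter values are hypothetical and chosen only for illustration. When the output range covers the disturbance range the residual error vanishes; when it does not, the shortfall shows up directly as error.

```python
def worst_error(disturbances, output_limit, r=0.0, k=0.5, steps=100):
    """Largest residual |error| over a set of constant disturbances.

    Assumed model (illustrative only): p = o + d, with the output o
    clipped to the range [-output_limit, +output_limit].
    """
    worst = 0.0
    for d in disturbances:
        o = 0.0
        for _ in range(steps):
            e = r - (o + d)
            # Integrate the error, then clip to the available output range.
            o = max(-output_limit, min(output_limit, o + k * e))
        worst = max(worst, abs(r - (o + d)))
    return worst


if __name__ == "__main__":
    ds = [-5.0, 0.0, 5.0]
    print(worst_error(ds, output_limit=10.0))  # output range covers the disturbances
    print(worst_error(ds, output_limit=3.0))   # output range is too small
```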

There have been many people like Ashby in cybernetics and allied occupations who are too smart for their own good. They are used to coming up with their own explanations of things like control systems, and are so confident that they never actually learn how others solved the same problems. They reinvent wheels and often miss the obvious simplifications that are possible. A lot of them simply love complexity, instead of being made suspicious by it that they have gone down an unnecessarily twisty path.

Best,

Bill P.