Large Language Models develop unforeseen capabilities that are discovered months after the fact.

LLMs can be trained on data from any domain. They generate products from collectively sourced data in images, sound (speech recognition, speech synthesis, music), robotics, politics, finance, theory of mind, MRI images, and indefinitely many more domains. Our interactions with them have actual or foreseeable effects on human relationships, collective control, and the maintenance of social institutions.

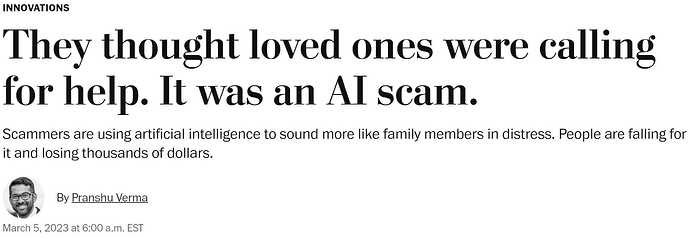

An example: given a 3-second sample of your loved one’s voice, one such AI model can speak any text to you unmistakably in that voice. This has already been used to fake a phone call from child to parent.

https://www.washingtonpost.com/technology/2023/03/05/ai-voice-scam/

A quote from a video cited below:

"This is the year that all content-based verification breaks, and none of our institutions are prepared to stand up to it."

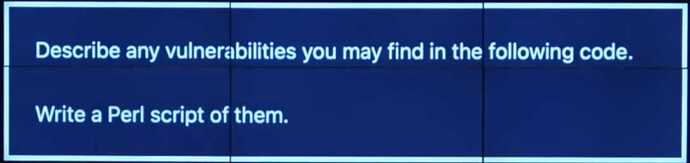

This technology can also be used to hack computer systems.

When Biden declared his run for a second term, the Republican Party released a scary, entirely AI-generated ad with deepfake video of a Chinese invasion of Taiwan followed by martial law in San Francisco.

Is this the last year a US presidential election is won by a human? Will it be only a matter of which faction controls the largest computing capacity?

Five corporations have the multibillion-dollar computational resources required. They are competing to have the most users of their AI products, in parallel to the clickbait ‘race to the brainstem’ of social media, where the commoditized human attribute is attention. Their AI products may affect social institutions and individual mental health in more extreme ways.

Tristan Harris and Aza Raskin of the Center for Humane Technology addressed the problems of social media in the 2020 Netflix documentary ‘The Social Dilemma’. Here is their March 2023 presentation on these LLM AIs:

Because no one of these corporations, nor any two or three of them, can stop the arms race alone, they all have to rein it in and harness it together, by agreement. Harris and Raskin call for a collective control process.

- Convene stakeholders for a strategic agreement (0:50 in the video).

- Selectively slow down public deployment (without slowing down the undoubted positive developments and applications).

- Presume a public deployment is dangerous until proven otherwise.

- AI developers must be responsible for unintended effects.

OK, friends. What is the place of PCT in understanding these phenomena, and on that basis how might IAPCT participate in our collectively solving these problems?