[From Bruce Abbott (2010.01.02.1400 EST)]

During the 1930s my grandfather

experimented with a system to reduce noise in the radio receivers of the day.

His idea was to use two receivers, one tuned to the frequency of the signal and

the other to another frequency, just off the main frequency, which presumably

would receive much of the same relatively broad-band noise. The phase of the

audio signal from the off-frequency channel was inverted and summed with the

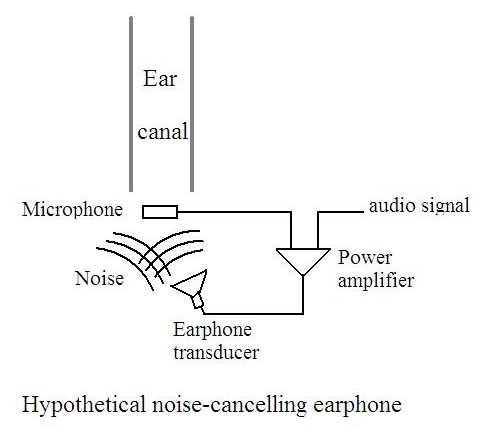

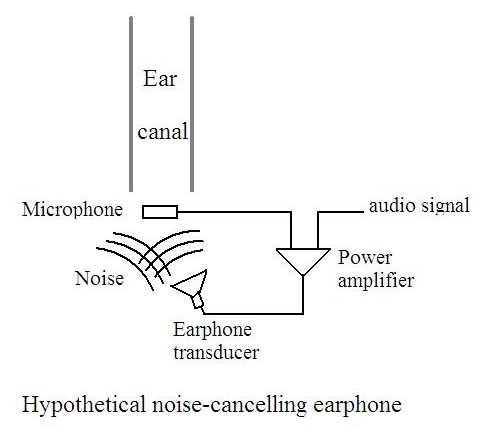

audio signal of the main frequency. Much the same thing is done today in “noise-cancelling”

headphones: a microphone picks up external sounds and, after suitable inversion

and scaling, beats this signal against the one being heard inside the

headphones. (The latter includes the intended audio plus external noise that

leaks through the insulation of the headphone ear cups.)
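
A minimal sketch of the two-channel idea in Python, assuming an idealized off-frequency channel that picks up exactly the same noise as the main channel and none of the signal. The function name `feedforward_cancel` and the test signals are hypothetical; the sketch shows only the invert, scale, and sum step, not any actual circuit of the period.

```python
import math
import random


def feedforward_cancel(main, reference, gain=1.0):
    """Invert the noise-only reference channel, scale it by `gain`, and sum it with the main channel."""
    return [m - gain * r for m, r in zip(main, reference)]


# Hypothetical test signals: a 440 Hz tone plus broadband noise, sampled at 8 kHz.
random.seed(0)
fs = 8000
n = 800
signal = [math.sin(2 * math.pi * 440 * k / fs) for k in range(n)]
noise = [random.gauss(0.0, 0.5) for _ in range(n)]
main = [s + v for s, v in zip(signal, noise)]  # main receiver: signal plus noise
reference = noise[:]                           # off-frequency receiver: idealized as noise only

out = feedforward_cancel(main, reference)
# Largest deviation from the clean signal; only floating-point rounding error remains here.
print(max(abs(o - s) for o, s in zip(out, signal)))
```

Real off-frequency noise is only partly correlated with the noise in the main channel, so in practice the cancellation would be partial rather than this exact.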

In both cases, we have noise mixed in with the intended signal. A sensor picks up what is intended to be the noise alone. If we label the noise as “disturbance,” then these systems sense the disturbance to the signal and use that sensed value to create an opposing action that cancels out the disturbance. How well they function depends on adequate sensing of the disturbance and on correct calibration (e.g., the inverted noise signal must be scaled to have the same magnitude as the noise component of the main signal and opposite phase).
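
To put a number on the calibration requirement, consider one sinusoidal component of the noise, A·cos(ωt), and a cancelling signal with gain g and phase error φ, so that the residual is A·cos(ωt) − g·A·cos(ωt + φ), whose amplitude is A·|1 − g·e^{jφ}|. The Python helper below (`residual_fraction` is a hypothetical name) evaluates that fraction for a few assumed calibration errors.

```python
import cmath
import math


def residual_fraction(gain, phase_error_rad):
    """Fraction of a sinusoidal noise component left after feedforward cancellation.

    Noise: A*cos(w*t). Cancelling signal: gain*A*cos(w*t + phase_error).
    The difference has amplitude A*|1 - gain*exp(j*phase_error)|.
    """
    return abs(1 - gain * cmath.exp(1j * phase_error_rad))


# A few hypothetical calibration errors: (gain, phase error in degrees).
for gain, phase_deg in [(1.0, 0.0), (0.9, 0.0), (1.0, 10.0), (1.0, 60.0), (1.0, 180.0)]:
    frac = residual_fraction(gain, math.radians(phase_deg))
    print(f"gain={gain:.1f} phase error={phase_deg:5.1f} deg -> residual {frac:.3f} of original noise")
```

A 10% gain error leaves 10% of the noise, a 60° phase error leaves the noise at its original amplitude, and a 180° error doubles it. Because a fixed time delay produces a phase error that grows with frequency, the calibration has to hold across the whole noise band.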

These systems seem to meet the engineering definition of feedforward control. Or am I missing something?

In the case of the noise-cancelling headphones, I can envision a system that would employ feedback rather than feedforward. A microphone inside the headphone cups would pick up the sound, which would include the audio plus any noise leaking through the ear cups from outside. This signal could be compared to the intended audio signal (the reference signal), and the output adjusted to diminish the difference. But this would seem to require a rather complex device, one capable of adjusting a wide range of frequencies simultaneously, each by exactly the amount required given the amplitudes of the various frequencies present in the disturbance. In this case the feedforward system would appear to be simpler and more effective.
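
A rough Python sketch of the feedback alternative, under strong simplifying assumptions: a single hypothetical integrating controller, no acoustic delay beyond one sample, and a pure-tone disturbance. The function `feedback_residual_ratio` and its parameters (`ki`, `fs`, `n`) are illustrative only, not a description of any real headphone design.

```python
import math


def feedback_residual_ratio(freq_hz, ki=0.5, fs=8000, n=8000):
    """Simulate a hypothetical integrating feedback loop inside an ear cup.

    Inner microphone: y[k] = r[k] + d[k] + o[k], where r is the intended audio (reference),
    d is outside noise leaking in (disturbance), and o is the controller's anti-noise output.
    Controller: o[k+1] = o[k] + ki * (r[k] - y[k]).
    Returns RMS of the noise left at the microphone divided by RMS of the disturbance,
    measured over the second half of the run, after start-up transients have decayed.
    """
    o = 0.0
    resid_sq = 0.0
    dist_sq = 0.0
    for k in range(n):
        r = 0.3 * math.sin(2 * math.pi * 440 * k / fs)  # intended audio (reference signal)
        d = math.sin(2 * math.pi * freq_hz * k / fs)     # leaked outside noise
        y = r + d + o                                    # what the inner microphone hears
        e = r - y                                        # reference minus perceived sound
        if k >= n // 2:
            resid_sq += (y - r) ** 2
            dist_sq += d ** 2
        o += ki * e                                      # integrate the error into the output
    return math.sqrt(resid_sq / dist_sq)


for f in (20, 200, 2000):
    print(f, round(feedback_residual_ratio(f), 3))
```

With these assumed settings the loop leaves roughly 3% of a 20 Hz disturbance and roughly 30% of a 200 Hz one, but about 1.26 times a 2000 Hz one, i.e. it makes high-frequency noise worse. A single simple controller does not treat all frequencies alike, which is consistent with the concern that a feedback version would need a more elaborate design.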

Bruce