[From MK (2015.07.31.2340 CET)]

···

----

Subject: Re: Intrinsic reference conditions

[From Bill Powers (2007.12.24.0910 MST)]

[Martin Taylor 2007.12.23.23.43]

I guess I wasn't clear. An "error" in perceptual control

theory is the difference between a reference value and a

perceptual value in a control unit. The reason there is no

"intrinsic error" is that there is no reference value for an

intrinsic variable. If there is nothing for the value of a

variable to be compared against, the concept of "error" does

not apply.

[My comment continued]

On awakening this morning I got out B:CP and looked through it,

with some trepidation, for the discussion of homeostasis as it

relates to intrinsic reference signals and error signals.

Sure enough, it isn't there. Neither "homeostasis" nor "Cannon"

appears in the index nor, as far as I can find, in the text.

I was so focused on the connection between intrinsic error signals

and reorganization that I simply passed over the homeostatic

systems in which the reference signals and error signals appear.

I'm sure I must have written many times about homeostasis (I know

I reported to CSGnet upon discovering Mrosovsky's "Rheostasis"),

but I can't find anything about it in B:CP, even though I was

quite aware of that subject at the time of writing and considered

it to show a level of biochemical (and autonomic, as others have

reminded me) control systems. I can see that if another edition of

B:CP ever appears, it is going to require an added chapter on this

subject, or a large revision of the chapter on learning and

reorganization. I tell you, discovering a blind spot that large is

very painful.

One painful aspect of it is remembering how, when Gary Cziko wrote

about Bernard and Cannon in Without Miracles, I wondered why he

didn't credit me with applying control theory to homeostasis. The

reason is now quite clear: I didn't. I only thought I had done so.

So: my somewhat perfunctory mention of the possibility of a lack

of clear communication on my part turns out to be a very likely

explanation for why you, Martin, and probably many others don't

realize that the intrinsic control systems of which I spoke were

the same homeostatic systems that Bernard and then Cannon

recognized, and that led Arturo Rosenblueth, a student of

Cannon's, to bring this subject to Norbert Wiener's attention,

thus giving rise to cybernetics. My only addition was to propose

that large enough error signals (how I wish I had termed them

homeostatic error signals) cause reorganization of the behavioral

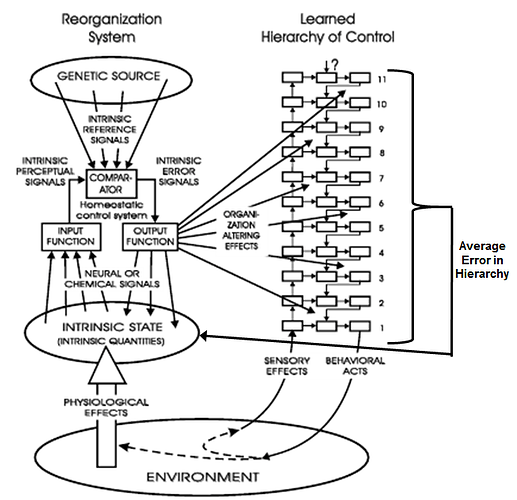

systems to begin. In my diagram of the relationship of the

reorganizing system to the behavioral hierarchy (Fig. 14.1) I show

ONLY the reorganizing effects of intrinsic error signals. The gap

left by omitting the local output functions that normally correct

intrinsic errors is now the most prominent feature of that diagram

in my mind. How could I not have seen what I was leaving out?

Dag Forssell, since it was you who drew the latest and clearest

version of Fig. 14.1, perhaps you could undertake to add those

missing output functions that convert intrinsic error signals into

physiological effects in that part of the diagram. But read on

first.

Writing this, I now realize that the "ignoration" of the

homeostatic control systems was more than a simple omission. I

failed to see a principle that becomes obvious when the

homeostatic systems are added in all their glory as complete

control systems. When the physiological loops are added, we see

that reorganization is triggered by excessive and prolonged error

signals in somatic control systems -- just as it is triggered by

excessive neural error signals in the behavioral systems of the

brain. This quickly brings in another consideration that I have

looked at and mentioned, which is that "pain" in many cases (if

not all) is simply an ordinary perceptual signal that is excessive

in magnitude, meaning that it is causing very large error signals.

Any perception, when carried to an extreme magnitude, is painful --

we try very hard to make it smaller. We can now say that any

error signal, whether in a biochemical, autonomic, or behavioral

control system, will, when large enough and protracted enough, be

experienced as pain and will cause reorganization to begin.
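
The "large enough and protracted enough" condition can be made concrete with a small sketch. This is only an illustration, not a model from B:CP: the class name ErrorMonitor and the parameters threshold and patience are hypothetical, and a fixed threshold held for a fixed number of steps is an assumed stand-in for whatever the real criterion is. The error itself is computed as reference minus perception, as in the quoted passage above.

```python
from dataclasses import dataclass


@dataclass
class ErrorMonitor:
    """Hypothetical trigger: fires when |error| stays large for long enough."""
    threshold: float  # assumed magnitude above which error counts as "excessive"
    patience: int     # assumed number of consecutive steps that counts as "protracted"
    streak: int = 0   # consecutive steps with excessive error so far

    def update(self, reference: float, perception: float) -> bool:
        error = reference - perception  # difference between reference and perception
        if abs(error) > self.threshold:
            self.streak += 1
        else:
            self.streak = 0  # a brief excursion does not count as protracted
        return self.streak >= self.patience


monitor = ErrorMonitor(threshold=1.0, patience=3)
perceptions = [0.2, 3.0, 0.1, 3.0, 3.0, 3.0]
fired = [monitor.update(reference=0.0, perception=p) for p in perceptions]
print(fired)
```

With these made-up numbers the single spike at the second step does not fire the trigger; only the run of three large errors at the end does.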

This tells us that the reorganizing system must be a distributed

system that brings reorganization to all levels of control systems

from bottom to top. At the level of DNA, it exists in the form of

repair enzymes. The immune system is a higher-order version of

repair enzymes. Reorganization exists at every level and acts

locally to that level. So we arrive at the question, "what about

amoebae?" And the answer, too.

Reorganization is simply an aspect of any level of biological

control systems.

And that brings up a realization delayed by some 35 years because

of that blind spot: every level of organization has ITS OWN

reorganizing system that senses excessive error and applies its

reorganizing actions to that level. So the diagram of Fig. 14.1 is

probably wrong. It is not error at the physiological level, but

only error at the behavioral level, that leads to reorganization

at the behavioral (neural, brain) level. Reorganization does

result from excessive error at the homeostatic level, but its

effects happen at that level. If we reorganize our behavior

because of physiological problems, we do so only because those

physiological problems are not corrected by reorganization at the

physiological level, and lead to excessive errors in the

behavioral systems. It is the latter kind of error that leads to

reorganization at the behavioral level. So now we see that every

new level has to deal with whatever errors the levels below it

can't handle, with reorganization happening just as control of any

kind happens: locally.
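
A toy sketch may help picture the revised arrangement, in which each level reorganizes only on its own excessive error and passes upward only what it cannot handle. Everything here is an assumption made for illustration: the names Level, capacity, threshold, and propagate are hypothetical, a simple subtraction stands in for a working control loop, and the residual handed upward stands in for the behavioral-level error that uncorrected physiological problems would produce. Reorganization itself is only counted, not modeled.

```python
from dataclasses import dataclass


@dataclass
class Level:
    name: str
    capacity: float   # hypothetical: how much error this level's own control cancels
    threshold: float  # hypothetical: residual above this counts as "excessive"
    reorganizations: int = 0

    def handle(self, incoming_error: float) -> float:
        """Cancel what this level can; reorganize locally if the rest is excessive."""
        residual = max(0.0, incoming_error - self.capacity)
        if residual > self.threshold:
            self.reorganizations += 1  # acts on this level only
        return residual  # whatever is left is the next level's problem


def propagate(levels, disturbance):
    """Pass a disturbance from the bottom level to the top; return what is left."""
    error = disturbance
    for level in levels:
        error = level.handle(error)
    return error


levels = [
    Level("physiological", capacity=2.0, threshold=1.0),
    Level("behavioral", capacity=2.0, threshold=1.0),
]

left_small = propagate(levels, 1.5)  # handled entirely at the bottom level
counts_small = [lv.reorganizations for lv in levels]

left_mid = propagate(levels, 4.5)    # bottom reorganizes; top copes without it
counts_mid = [lv.reorganizations for lv in levels]

left_large = propagate(levels, 6.0)  # both levels see excessive error of their own
counts_large = [lv.reorganizations for lv in levels]

print(counts_small, counts_mid, counts_large)
```

The point of the sketch is the middle case: a physiological problem large enough to trigger reorganization at its own level causes no reorganization at the behavioral level unless the error arriving there is itself excessive.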

I don't know how well this revision will survive aging, but it's

pretty clear that it wouldn't have occurred to me if you, Martin,

hadn't made the inflammatory proposal that there are no intrinsic

reference signals.

Best,

Bill P.

----

M