[Martin Taylor 2013.04.02.10.30]

Since I have been silent on the question of perceptual uncertainty for a couple of weeks, I thought I should explain why. It has nothing to do with lack of interest, as Rick surmised. In fact, the reason is the exact opposite. I found I was devoting so much time to the question of identifying the controlled variable in my little example of coding in the "Processing" language that I was not leaving time to: find the paperwork for this year's taxes; prepare an abstract for a workshop on PCT in computer interface (I don't think I will be going, since it is just one day in the UK); deal with stuff for my NATO group; actually program the demos I want to use in part 2 of my tutorial; program the kind of tests for the controlled variable that I had suggested were required in the earlier part of this thread; and finally, program the simulation that I had agreed with Bill was required to demonstrate the effective equivalence between the width of a perceptual tolerance zone and decreased apparent loop gain (this last is the reason for the tests that I had suggested in testing for the controlled variable).

Most of the above reasons for delay still hold, but I am writing this now because I will be away for the next week or so, and I didn't want you to think I had forgotten you.

Now to specifics:

[From Rick Marken (2013.03.18.2130)]

Rick Marken (2013.03.17.1510)--

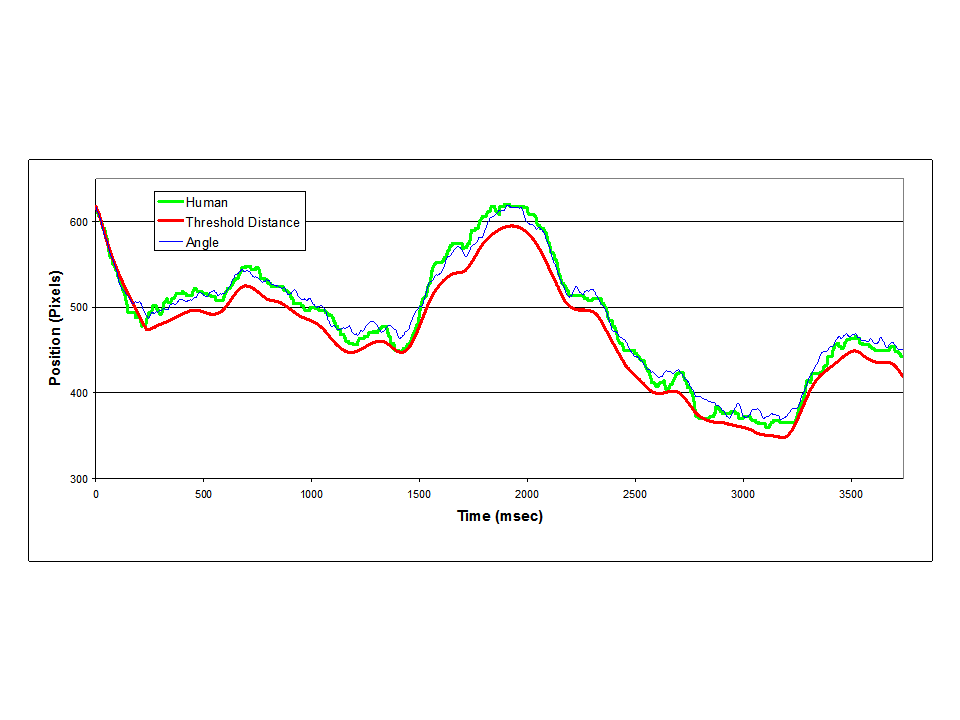

RM: Here are two figures that summarize the results of my analysis of some data from Martin's pursuit tracking task where the main independent variable was the horizontal separation, s, between cursor and target...

I can think of two possibilities; one is that system noise is amplified as s increase; the other is that the same level of existing noise in the system "shows through" in performance more when the loop gain goes down (as it does as s increases). This will be the next thing I try to test with the models.

RM: Well, not much interest in this apparently. But I find it fascinating. I did try adding a fixed level of noise to the simulation (a random number between -.4 and .4 that was added to the input variable of the v and a model). As expected the noise degraded performance equally for all separations for the v control model; but the noise degraded performance more as separation increased for the a control model.

There are two problems with this approach:

1. If you think of this as a model of what is happening within the human, you have a perceptual value that jitters all over the lot, but that's not what one actually perceives. What one perceives is that small changes in the relative positions of the target and cursor make no difference in the perception. If the orientation of the two is held constant, the perception does seem to change over time, but only slowly, not in a jittery manner. The fluctuations may well represent noise, but it is noise that is averaged over some long time period. Changing the perceptual relation to the sensory input randomly frame-by-frame does not model the perceptual experience very well.

2. The range of variation (of the relative position of the cursor and target over which one cannot see that they are not horizontally aligned) varies linearly with the horizontal separation, according to the measurements included with the original data. Even if the addition of frame-by-frame noise variation works as a model, the noise amplitude should range from about 1 pixel when the cursor and target ellipses touch to about 4.5 pixels at the widest separation.
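To make point 2 concrete, here is a minimal sketch of a noise amplitude that grows linearly with separation, from about 1 pixel when the ellipses touch to about 4.5 pixels at the widest separation. The function name and the parameters s_touch and s_widest are hypothetical; the actual separations used in the experiment are not given here, so they have to be supplied by the caller.

```python
def noise_amplitude_px(s, s_touch, s_widest, amp_touch=1.0, amp_widest=4.5):
    """Linearly interpolate the noise amplitude (in pixels) for separation s.

    s_touch and s_widest are the separations at which the ellipses touch and
    at which they are furthest apart (hypothetical parameters, in the same
    units as s). The endpoint amplitudes of 1 and 4.5 pixels are the
    approximate figures quoted in the text.
    """
    frac = (s - s_touch) / (s_widest - s_touch)
    return amp_touch + frac * (amp_widest - amp_touch)
```

For example, with an assumed separation range of 0 to 100 pixels, noise_amplitude_px(50, 0, 100) gives the midpoint of the two endpoint amplitudes.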

I had earlier suggested using a tolerance zone for the perceptual signal, using the expression

p = sign(qi)*max(abs(qi)-tol, 0)

where qi is the environmental input variable (typically qo +- d, +- depending on whether it is compensatory or pursuit tracking), and tol is the half-width of the tolerance zone. A tolerance zone does reflect the perceptual experience, and is applicable to cases in which the perceptual uncertainty is due to missing information rather than to noise-induced variation in a value. Take the bus-arrival time in my example: if you know that buses come every 15 minutes, you know that the next bus will come at some time between zero and 15 minutes from now, but if you don't know when the last bus came, that's all you do know. If you are controlling a perception of arriving at the bus stop just before the bus arrives, that 15-minute period is the tolerance zone of the controlled perception.
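As a minimal sketch, the tolerance-zone expression can be written in Python as follows. The function name is hypothetical; the arithmetic is the expression given above, with inputs inside the zone mapped to zero and inputs outside it reduced in magnitude by tol.

```python
import math


def tolerance_zone(qi, tol):
    """Perceptual signal p = sign(qi) * max(abs(qi) - tol, 0).

    qi  : environmental input variable
    tol : half-width of the tolerance zone (assumed non-negative)
    """
    # copysign applies the sign of qi to the magnitude that exceeds tol.
    return math.copysign(max(abs(qi) - tol, 0.0), qi)
```

With tol = 1, an input of 0.5 gives a perception of zero, while inputs of 3 and -3 give 2 and -2 respectively.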


On 2013/03/19 12:29 AM, Richard Marken wrote:

--

[Aside: A real-life example of the opposite situation happened in Denmark. We were visiting a friend in a suburb of Copenhagen many years ago, and were going to have dinner with a friend who lived further out on the nearby rail line. The train was scheduled for time X, and we were a few blocks from the station. About 10 minutes before train time, I was getting nervous about missing the train, but our host said there was no problem, because it takes 7 minutes to reach the station, and we would leave in 3 minutes. We did, and we climbed the station stairs as the train pulled in. It opened its doors as we arrived at the platform, and we boarded without breaking step.]

--

I thought I had presented a sufficient analysis of why changing the width of the tolerance zone emulates changing the loop gain, but Bill considered that a simulation was needed to demonstrate it, and I agree. I'm trying to include that in my programming using the Processing language.
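The kind of comparison such a simulation would make can be sketched in a few lines of Python. This is only an illustration under assumptions, not the simulation agreed with Bill: it assumes a compensatory task with a zero reference, a pure integrator output function, a sinusoidal disturbance, and simple Euler integration, and every name and parameter value in it is hypothetical.

```python
import math


def dead_zone(qi, tol):
    # Tolerance-zone perception: zero inside +-tol, reduced by tol outside.
    return math.copysign(max(abs(qi) - tol, 0.0), qi)


def rms_input(gain, tol, dt=0.01, duration=60.0):
    """RMS of the input variable qi for one simulated compensatory run.

    Assumed loop: qi = qo + d, p = dead_zone(qi, tol), e = 0 - p,
    and an integrator output qo that changes by gain * e * dt per step.
    """
    qo = 0.0
    total = 0.0
    steps = int(duration / dt)
    for i in range(steps):
        t = i * dt
        d = 10.0 * math.sin(2.0 * math.pi * 0.1 * t)  # assumed disturbance
        qi = qo + d
        e = 0.0 - dead_zone(qi, tol)  # reference fixed at zero
        qo += gain * e * dt
        total += qi * qi
    return math.sqrt(total / steps)


if __name__ == "__main__":
    for gain, tol in [(10.0, 0.0), (10.0, 2.0), (2.0, 0.0)]:
        print(f"gain={gain:4.1f} tol={tol:3.1f} rms qi={rms_input(gain, tol):.3f}")
```

In this sketch, both widening the tolerance zone at fixed gain and lowering the gain with no tolerance zone leave a larger RMS deviation of qi from the reference. That is consistent with the argument above, but it is not a demonstration of equivalence, which would need the two conditions to be compared across disturbances and over time.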

----------------

I don't want to burden you with problems, but in case you want to pursue the Processing language for further work in PCT demos and experiments, I thought it might be of some use to describe my experience as a novice in the language, though not in low-level programming (I started before there were even such things as assemblers, let alone compilers).

Firstly, if you get things right, Processing makes it easy to do a lot, even in 3D, and there are many very useful libraries. The output seems fast and effectively platform-independent, though I know no benchmarks to quantify that subjective impression. I'm using a GUI library called ControlP5, which gives you a lot of the standard controls and allows you to roll your own if you don't like any of the ones provided.

Secondly, the IDE is not very helpful in debugging. I grant that I may have missed possibilities that actually exist, but so far, the only way I have found to debug is by printing out messages to the embedded console, and by programming a "wait(milliseconds)" command to give you time to look at the result if the problem could be in a fast loop. The messages at compile-time (effectively that is the same as run-time) are mostly useful, though one or two have left me puzzled for a few minutes. The most difficult aspect of debugging is that I have found no equivalent of a "break-point and continue" that would allow one to query current variable values, for example. This lack has meant that I have spent many hours tracking down such simple errors as a minus where I should have had a plus, a scaling factor applied twice, or a multiplication where a division was appropriate. Such errors should be fixable in minutes by stepping along breakpoints rather than in the hours or days it has taken me to find some of them using my novice-level understanding of the language.

----

When I do have a working version of the program I'm trying to build, I want to remake it in LiveCode with the Allez-OOP Object-Oriented extension programmed by Allan Randall. It would be a good test of the relative good and bad points of the two systems.

For now, I'm going to keep working on my program and not contribute more than passing comments on CSGnet. There will probably not be many of those over the next week or ten days.

Martin