This reverberation from 1979 is very nice to see, Rick. Thanks!

You go directly to the problem that becomes more acute the higher we go in the hierarchy in the search for controlled variables: identifying “variables … which someone looking at this research might find interesting.”

Bill’s first response to this goes only as far as the training of students in a science: In “a basic course in experimental physics … the laboratory work consists mostly of re-running basic experiments with light, sound, force, and motion. Nobody is surprised at the results; that’s not the point. The point is to learn how to get those results yourself, and understand what they mean.”

The simplicity and obviousness of a phenomenon become a problem when communicating with an audience who already have a simple and obvious explanation for their perception of that phenomenon. For them to sustain that explanation requires imagined perceptual input and/or inattentional ignorance (see below). And there is also an affront to dignity. Experts find it unpleasant to be required to become students again, learning the basics by running simple and obvious experiments.

Bill’s second response goes to the fundamentals that students learn by running lab exercises such as those in LCS III. “All the fundamental quantities of physics are experimentally determined. Psychology needs a base like that.” You asked in your letter: isn’t this a matter of psychophysics? If you can perceive it, then you can have a reference value for it and control it accordingly (as best you can, i.e. even if your outputs and feedback function are ineffectual). And if you can, we assume that any human can “short of sensory or maturational deficiencies in the subject or experimenter”.

Psychophysics suffices, alas, only for the lowest levels of the hierarchy, and it is at higher levels that we find “variables … which someone looking at this research might find interesting.”

Bill suggests displaying a triangle and a circle and asking the subject to control equivalence of linear span, area, and (perceiving them as views of a tetrahedron and a sphere) volume. It is easy to write a computer simulation that calculates the length, area, and volume of an imputed 3-D configuration. Probably no neuroscientist believes that the brain quantifies length, vertices, and radius and calculates e.g. b*h/2, or retrieves the value of pi from memory and calculates squares or cubes. And note that if the brain did this we would have no need of such formulae. A model that replicates the kinds and degrees of error observed in human estimations of these values might correspond to what the brain is doing.
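To make concrete how easy the formula-based calculation is (and so how little it tells us about the brain), here is a minimal sketch. It assumes an equilateral triangle seen as a face of a regular tetrahedron, and a circle seen as the outline of a sphere; those shape choices and all the function names are mine, not Bill’s.

```python
import math

def triangle_measures(side):
    """Equilateral triangle of the given side; the imputed solid is
    assumed to be a regular tetrahedron with that edge length."""
    return {
        "span": side,
        "area": math.sqrt(3) / 4 * side ** 2,
        "volume": side ** 3 / (6 * math.sqrt(2)),
    }

def circle_measures(radius):
    """Circle of the given radius; the imputed solid is a sphere."""
    return {
        "span": 2 * radius,
        "area": math.pi * radius ** 2,
        "volume": 4 / 3 * math.pi * radius ** 3,
    }

def radius_matching(side, quantity):
    """Radius at which the circle equals the triangle on one quantity."""
    target = triangle_measures(side)[quantity]
    if quantity == "span":
        return target / 2
    if quantity == "area":
        return math.sqrt(target / math.pi)
    if quantity == "volume":
        return (3 * target / (4 * math.pi)) ** (1 / 3)
    raise ValueError(f"unknown quantity: {quantity}")

if __name__ == "__main__":
    for quantity in ("span", "area", "volume"):
        print(quantity, round(radius_matching(1.0, quantity), 4))
```

For a given triangle, each of the three equivalences calls for a different circle radius, so where the subject settles would indicate which of the three is being controlled. The code only supplies the reference answers; it is not offered as a model of how a person arrives at them.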

Even without “sensory or maturational deficiencies” or intraspecies genetic variation such as color blindness, there is inattentional ignorance when higher-level processes affect awareness at lower levels (Rock et al. 1992, Chabris & Simons 2011). Above the level of competence for psychophysics, the experimenter’s perceptions cannot be presumed to match the subject’s, any more than they can be across species.

Bill’s third response about ‘obvious’ observations proposes developmental research and cross-species research. (He didn’t mention the Plooijs’ research into both, which was informed by B:CP; Frans got his Ph.D. the following year, 1980, but they had been in communication.) These are wide open fields of research.

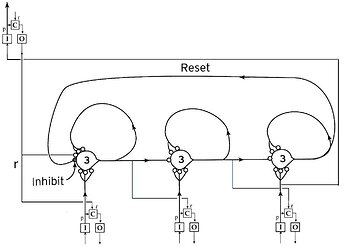

He proposes representing a simple program as a graph that has conditions associated with the nodes which determine which edge (‘path’) a moving indicator (‘small circle’) should follow: “if condition 1, take the counterclockwise turn; if 2, go straight, and if 3 take the clockwise turn”. The control system that invokes the program “is continually perceiving whether the program is running correctly, and if not, correcting the errors.”
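A graph of this kind can be sketched in a few lines. The four nodes, their conditions, and their connections below are hypothetical (I am not reproducing Bill’s figure); only the rule mapping conditions 1, 2, and 3 to counterclockwise, straight, and clockwise comes from his description.

```python
# Rule quoted from Bill's description: condition number -> turn to take.
TURN_FOR_CONDITION = {1: "counterclockwise", 2: "straight", 3: "clockwise"}

# Hypothetical graph: node -> (condition at that node, {turn: next node}).
GRAPH = {
    "A": (1, {"counterclockwise": "B", "straight": "C", "clockwise": "D"}),
    "B": (2, {"counterclockwise": "A", "straight": "C", "clockwise": "D"}),
    "C": (3, {"counterclockwise": "A", "straight": "B", "clockwise": "D"}),
    "D": (1, {"counterclockwise": "A", "straight": "B", "clockwise": "C"}),
}

def correct_next(node):
    """Node the indicator should move to if the program runs correctly."""
    condition, exits = GRAPH[node]
    return exits[TURN_FOR_CONDITION[condition]]

def run(start, steps):
    """Sequence of nodes visited by an error-free run of the program."""
    path = [start]
    for _ in range(steps):
        path.append(correct_next(path[-1]))
    return path

if __name__ == "__main__":
    print(run("A", 4))
```

In this sketch the conditions are fixed per node for simplicity; in a live demo they would presumably be displayed or varied as the indicator moves.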

I believe that the only way that it can do this is by running the program in imagination and comparing the imagined output at each step with the observed output on the screen. Do you have another suggestion?

If this is so, you must model the program as well as the monitoring of whether or not it is running correctly. A computer simulation of what the subject is doing is as follows: Run the same program twice in parallel: one correctly, the other with random errors. At each program step compare the output of the former with the observed output of the latter, and if they differ send a signal. Act on that signal to resume the correct program at the next step. (“Next step” is simple for your iterated loop, a bit more involved in Bill’s graph.)
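Here is a minimal sketch of that simulation, under loud assumptions: the “program” is a hypothetical four-state loop (not your demo’s actual program), errors are injected with a fixed probability, and “correcting” means simply resetting the observed run to the imagined one. All names are illustrative.

```python
import random

# Hypothetical iterated loop standing in for the program being monitored.
NEXT = {"A": "B", "B": "C", "C": "D", "D": "A"}

def monitor(start, steps, error_rate, seed=0):
    """Run the program twice in parallel and compare at every step.

    'imagined' is the error-free run; 'observed' is the same run with
    random errors. A mismatch produces an error signal, and the observed
    run then resumes from the correct state at the next step.
    """
    rng = random.Random(seed)
    imagined = observed = start
    log = []
    for step in range(steps):
        imagined = NEXT[imagined]      # what should appear
        observed = NEXT[observed]      # what appears, barring an error
        if rng.random() < error_rate:  # inject a random wrong output
            observed = rng.choice([s for s in NEXT if s != observed])
        error_signal = observed != imagined
        log.append((step, imagined, observed, error_signal))
        if error_signal:
            observed = imagined        # correction: resume the correct run
    return log

if __name__ == "__main__":
    for entry in monitor("A", steps=12, error_rate=0.25, seed=1):
        print(entry)
```

Resetting to the imagined state is only the simplest kind of correction. It says nothing about why the error occurred, which is where the other possibilities come in.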

Bill does not say what the errors might be or how the invoking system might correct them. Resuming the program after the last correctly-executed step may be the simplest possibility, but it is not the only possibility. For example, there might be a disturbance to control of input that is required for that step, or there might be a bug in the program and the remedy is for a superordinate system (perhaps the same one) to resume a process that created the program in the first place.

Your demo and Bill’s proposed demo fail to model human behavior in an important respect: in neither case does the invoking of the program have any motivation (other than being responsive to the experimenter or the demo prompts), nor can it. It just meanders on without delivering any conclusion.

In a living control system, the system that invokes a program does so because it requires the output of the program in its perceptual input.

Bill emphasizes “Notice that this program does not handle symbols!” In B:CP (pp. 166-167), Bill had already rejected the assumption of Cognitive Science that the brain does ‘information processing’ with symbol-manipulating rules operating upon a ‘cognitive map’. Whatever the subject of an experiment or demo is doing when we observe them controlling whether or not a computer program is running, the computer code for that program does not represent it. (See calculations of length, area, and volume, above.)

He goes on to laud Miller, Galanter, & Pribram’s Plans and the Structure of Behavior, saying that it

“… constitutes a starting point for the investigation of our seventh-order systems. Those whose interest is in giving content to this model would do well to begin with Plans, for it is as close to a textbook of seventh-order behavior as now exists.”

(The 7th order of 1973 later became the 9th order.) He ends by saying that B:CP is “not concerned … with amassing examples of specific behaviors,” but since you have called repeatedly for exactly that, it is not too late for you to start mining Miller et al. for examples to model. I’m confident you have a copy. For others, used copies are available at abebooks.com, and a PDF may be downloaded free from Z-Library.

References:

Chabris, C., & Simons, D. (2011). The invisible gorilla. HarperCollins.

Rock, I., Linnet, C. M., Grant, P. I., & Mack, A. (1992). Perception without attention: Results of a new method. Cognitive Psychology, 24(4), 502–534. doi:10.1016/0010-0285(92)90017-v