
[From Rupert Young 2012.11.06 23.30 UT]

Bill Powers (2012.11.05.1355 MST)

BT: If you figure out for yourself what is wrong, you will never forget it. If I figure it out and tell you, you will just memorize an action, which will probably be the wrong one next time.

RY: That makes sense, and I appreciate your guidance on this matter; though you may be overestimating my ability to work it out! Btw, are you aware of what is wrong, or are you just suggesting approaches for investigation?

BT: To make sense of this system you must *understand how each part of it works*, not just enter equations and run the program. Put the final model together piece by piece and don’t move on to the next piece until you understand the previous pieces.

RY: I was trying to do something like that (the third and fourth sheets of the spreadsheet had a control system using a constant disturbance and a plot of ln values), but kept getting stuck. However, I’ve had another go along the lines you suggested, using the third sheet (ln int) in the attached.

If a = b = 3 then ratio = 1 and ln(ratio) = 0

If a = 3 and b = 9 then ratio = 3 and ln(ratio) = 1.099

If a = 3 and b = 27 then ratio = 9 and ln(ratio) = 2.197

If a = 3 and b = 81 then ratio = 27 and ln(ratio) = 3.296

I note that each time the ratio is multiplied by 3, ln(ratio) increases by 1.099, which is ln(3).
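
The four cases above can be checked with a few lines of Python. This is only a sketch: the helper name `ln_ratio` is hypothetical, and it takes the ratio as b/a, which is what the quoted numbers imply.

```python
import math

def ln_ratio(a, b):
    """Natural log of the ratio b/a (both inputs must be positive)."""
    return math.log(b / a)

a = 3.0
for b in (3.0, 9.0, 27.0, 81.0):
    print(f"a = {a:g}, b = {b:g}, ratio = {b / a:g}, ln(ratio) = {ln_ratio(a, b):.3f}")

# Multiplying the ratio by k adds ln(k) to ln(ratio), whatever the starting ratio.
step = ln_ratio(a, 27.0) - ln_ratio(a, 9.0)
print(f"step = {step:.3f}, ln(3) = {math.log(3):.3f}")
```

The constant step follows from ln(k*x) = ln(k) + ln(x): equal multiplications of the ratio become equal additions to the perceptual signal.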

Closing the loop, using a pure integrator and starting with a = b = 3:

if the reference is 0.1, the output slowly approaches the target of 30;

as the reference increases from 0.1 to 10, the output approaches its target more quickly;

around 12, the output oscillates around the target;

at 13 and higher, the system goes out of control.
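
A rough Python sketch of this experiment follows. Its assumptions are inferred from the figures in this message, not taken from the spreadsheet: the perception is ln(a/o) with a = 3, the reference is converted to ln units, and the output is updated as o = o + g*e with g = -0.5 (dt folded into the gain), starting from o = 3. The function name `simulate` is hypothetical. With these assumptions the third output of the reference-13 run comes out at 0.714, the value quoted later in this message.

```python
import math

def simulate(ref, a=3.0, o0=3.0, g=-0.5, steps=2000):
    """Iterate o = o + g*e with e = ln(ref) - ln(a/o); return every output value."""
    o = o0
    history = [o]
    for _ in range(steps):
        if o <= 0.0:           # ln(a/o) is undefined; stop instead of raising
            break
        p = math.log(a / o)    # perception: log of the ratio
        e = math.log(ref) - p  # error, in ln units
        o = o + g * e          # pure integrator, dt folded into g
        history.append(o)
    return history

for ref in (0.1, 6.0, 10.0, 13.0):
    h = simulate(ref)
    target = 3.0 / ref
    multiplier = 1.0 + (-0.5) / target  # local step multiplier 1 + g/o at the target
    print(f"ref = {ref:g}: target = {target:.3f}, multiplier = {multiplier:+.3f}, "
          f"last two outputs = {h[-2]:.4f}, {h[-1]:.4f}")
```

Under these assumptions a small deviation from the target is multiplied by 1 + g/o = 1 - ref/6 on each iteration. Its magnitude is below 1 only for references under 12, which would be consistent with the behaviour described above.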

So here I run into an impasse again, as normally, with a “standard” control system, increasing the reference would just result in a longer time for the system to come under control (wouldn’t it?).

Though row 4 (highlighted in sheet "ln int") doesn't look right. The starting output is 0.714 and the target, the value that would bring the ratio to the reference of 13, is 0.23. With a gain of -0.5 one would expect g*e to be half the distance between 0.714 and 0.23, but in this case it is 0.565, which means the output overshoots. So is it the case that the error, and therefore the change we are applying to the output, is not in the right units?
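
The row 4 arithmetic can be laid out as a short Python sketch. The assumptions here are that a = 3, that the perception is ln(a/o), and that the reference is compared in ln units; none of this is confirmed by the spreadsheet, but together they reproduce the 0.565 figure.

```python
import math

a, ref, g = 3.0, 13.0, -0.5   # a = 3 and perception ln(a/o) are assumptions
o = 0.714                     # starting output quoted for row 4
target = a / ref              # output that makes a/o equal the reference

e = math.log(ref) - math.log(a / o)   # error, in ln units
step_applied = g * e                  # what o = o + g*e adds to the output
step_expected = 0.5 * (target - o)    # half the distance, in output units

print(f"target = {target:.3f}")
print(f"g*e = {step_applied:.3f}, half the distance = {step_expected:.3f}")
print(f"new output = {o + step_applied:.3f}")
```

In this sketch the error is a difference of logarithms while the output is a plain number, so the same gain produces a step more than twice the size of the "half the distance" step and carries the output past the target.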

It's late, so I'll sleep on it; though any hints welcome.

Regards,

Rupert

On 05/11/2012 21:37, Bill Powers wrote:

[From Bill Powers (2012.11.05.1355 MST)]

Rupert Young 2012.11.05 19.00 UT

More on this later.

RY: I have used the leaky integrator, I think: o = o + s*(g*e - o). What is the pure integrator?

It's just o = o + g*e*dt.

There should be a dt in the leaky integrator version, too, defining the length of time represented by one iteration of the program.
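
The two forms can be put side by side in Python. This is a minimal sketch: the function names are hypothetical, and placing dt as a multiplier on the whole leaky update is an assumption, since the message only says that a dt belongs there.

```python
def pure_integrator(o, e, g, dt):
    """o = o + g*e*dt: the output keeps growing while the error is non-zero."""
    return o + g * e * dt

def leaky_integrator(o, e, g, s, dt):
    """o = o + s*(g*e - o)*dt: the output settles toward g*e (dt placement assumed)."""
    return o + s * (g * e - o) * dt

g, e, s, dt = 2.0, 1.0, 1.0, 0.1   # illustrative values only
o_pure = o_leaky = 0.0
for _ in range(100):               # 100 iterations = 10 time units
    o_pure = pure_integrator(o_pure, e, g, dt)
    o_leaky = leaky_integrator(o_leaky, e, g, s, dt)

print(f"pure integrator:  {o_pure:.3f}")
print(f"leaky integrator: {o_leaky:.3f}")
```

With a constant error the pure integrator accumulates g*e*dt on every iteration, while the leaky version levels off near g*e.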

However, using a sine function (see sheet LN Perc Sine) for “b” control doesn’t appear to work. The values of the sine function are between 0 and 1. With a ratio reference of 0.001 the output should come to 1000. I have tried changing the values of slow and gain, but can’t seem to get this to work. Or maybe control is successful but is lagging behind the sine function. Perhaps there is a flaw in using values between 0 and 1 for “b”.

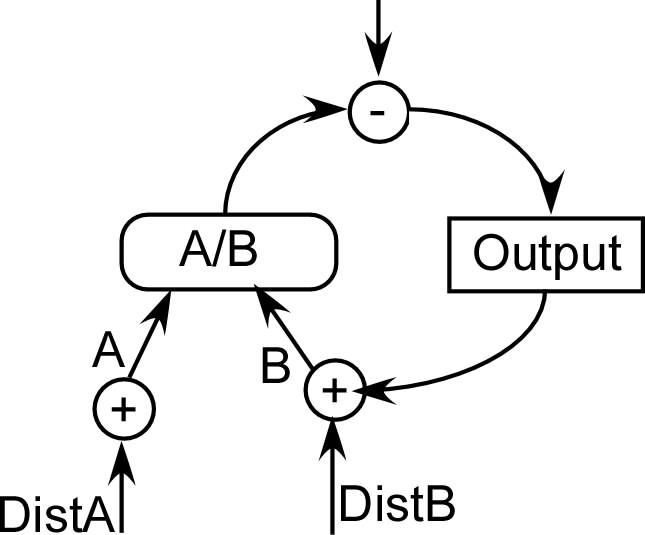

The trick here is to look at the behavior of each variable and see if it makes sense. First, set the two inputs to values with a ratio somewhere near 1 (say, between 0.1 and 10.0) and see what the perceptual signal is. Vary one of the inputs and see how the perceptual signal changes. This will show you the range of values of the perceptual signal. Set the reference signal somewhere within this range, and look at the error signal. Then close the loop and, with the disturbing variable constant, see how the output changes the other input variable and whether that variable approaches the correct value. You can adjust dt to make the approach to the final values happen in a reasonable time: not too fast, not too slow. You may find at this stage that a gain is much too large, or much too small. You may find that a sign is wrong so the error gets larger instead of smaller as each dt goes by.
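
The open-loop part of this procedure might look like the following in Python. It is a sketch under assumptions: the perceptual signal is taken to be ln(b/a), and the name `perception`, the list of ratios, and the reference value are all illustrative.

```python
import math

def perception(a, b):
    """Perceptual signal, assumed here to be ln(b/a)."""
    return math.log(b / a)

a = 1.0                                # held fixed while b is varied
ratios = [0.1, 0.3, 1.0, 3.0, 10.0]    # ratios near 1, as suggested
signals = [perception(a, a * r) for r in ratios]
for r, p in zip(ratios, signals):
    print(f"ratio = {r:g}, perceptual signal = {p:+.3f}")

p_min, p_max = min(signals), max(signals)
reference = 1.0                        # hypothetical choice inside the observed range
print(f"range = [{p_min:.3f}, {p_max:.3f}], reference inside range: {p_min < reference < p_max}")
print(f"open-loop error at ratio 1: {reference - perception(a, a):.3f}")
```

Only once the range and the error look sensible would the loop be closed.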

To make sense of this system you must *understand how each part of it works*, not just enter equations and run the program. Put the final model together piece by piece and don’t move on to the next piece until you understand the previous pieces. By “understand” I mean to figure out what you should see, then check to see if that is what happens. If the inputs are a = 10 and b = 20, then what should the perceptual signal be? Is that what it is? Then subtract the perception from the reference and see what the error is. Then after one iteration check the error again and see if it’s changing the right way and by a reasonable amount. And so on.
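
A predict-then-check pass for the a = 10, b = 20 case could be sketched in Python as below. The assumptions are that the perception is ln(b/a), that the output acts on b while a is the constant disturbance, and that the reference, gain and dt take the illustrative values shown.

```python
import math

a, b = 10.0, 20.0          # inputs from the text; a is held constant as the disturbance
r, g, dt = 1.0, 10.0, 0.1  # hypothetical reference, gain and time step

p0 = math.log(b / a)       # prediction: ln(2), about 0.693
e0 = r - p0                # error before any output change

b = b + g * e0 * dt        # one iteration of a pure integrator acting on b
p1 = math.log(b / a)
e1 = r - p1                # error after one iteration

print(f"p0 = {p0:.3f}, e0 = {e0:.3f}")
print(f"p1 = {p1:.3f}, e1 = {e1:.3f}")
print(f"error shrinking without changing sign: {abs(e1) < abs(e0) and e0 * e1 > 0}")
```

If the error grew, or flipped sign on the first iteration, that would point to a wrong sign or a gain that is too large for the chosen dt.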

The first time you do this it can get pretty tedious, but you will quickly get better at it. You’ll soon be able to do most of it in your head without even running the program.

If you figure out for yourself what is wrong, you will never forget it. If I figure it out and tell you, you will just memorize an action, which will probably be the wrong one next time.

Best,

Bill P.