Your program code says “if the shape is circle, the next color is blue; else, the next color is red”.

The subject can tell when the program is running and when it is not by controlling two sequence perceptions in parallel:

- circle followed by blue object

- square followed by red object

Your demo can’t tell whether I’m doing that or controlling a contingency as specified by your pseudocode (and your computer code). Nor could you tell which I am doing, if you were observing me, except to believe my testimony. I reported all this in February 2018 (links earlier in this topic).
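To make the point concrete, here is a minimal sketch, assuming the display shows only circles and squares and only blue and red objects. The names `program_ok` and `sequences_ok` are hypothetical and are not taken from the demo's code. It compares the verdict of the if/else contingency with the verdict of two sequence perceptions monitored in parallel, for every shape/color pair.

```python
from itertools import product

# Assumption: only these shapes and colors ever appear in the display.
SHAPES = ("circle", "square")
COLORS = ("blue", "red")

def program_ok(shape, color):
    """Contingency as worded in the pseudocode: if circle then blue, else red."""
    expected = "blue" if shape == "circle" else "red"
    return color == expected

def sequences_ok(shape, color):
    """Two sequence perceptions checked in parallel, with no 'if' involved."""
    return (shape, color) in {("circle", "blue"), ("square", "red")}

# Compare the two verdicts for every possible shape/color pair.
verdicts = {
    (s, c): (program_ok(s, c), sequences_ok(s, c))
    for s, c in product(SHAPES, COLORS)
}
indistinguishable = all(p == q for p, q in verdicts.values())
print(indistinguishable)
```

Under these assumptions the two checks agree on every pair, so spacebar presses alone cannot show which of the two is being controlled.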

Each sequence requires control at the configuration level followed immediately by control at the sensation level. I found that integrating these different levels into a sequence perception, and maintaining control of the two disparate sequences concurrently, resulted in hesitations and errors. This is probably related to how sensations are perceptual inputs to configurations. The hesitations and errors account for the difference in speed of performance.

Reciting the pseudocode to determine whether or not to press the spacebar was far slower. It may be that long practice would create a perceptual input function that would enable efficient control. But how reorganization resolved the problem in neural structures would still be unknown. Reorganization might very well resolve it as concurrent control of two sequence perceptions. We have no way of knowing by means presently available to us.

What is of interest to me, and what I believe is the reason for Hugo’s inquiries about propositional logic, is not a perception of whether or not a computer program is running (which is control by a system above the Program level), but rather how a living control system does what we may perceive as controlling at the Program level. This is what the phrase ‘program control’ means to me.

‘Program control’ in your sense of the term is done by a higher-level system recognizing when a program has stopped and acting to restart it. This is very different from program control at a Program level of choice points and contingencies. (If the analogy to computer programs and mathematical logic is at all serious, much more than choice points and contingencies is involved.)

Often, we do not know what the output of a program should look like. That’s why we have programs, to produce solutions to problems that are not known in advance. Even when we do know what the desired output looks like, evaluation of output is a separate step. Even when a recipe is a network of contingencies (many if not most are sequences without choice points; the selection of alternative ingredients is done before starting), the perception of when it is completed and the perception of whether or not it has delivered the desired results are entirely distinct. Yes, the cook moves immediately to taste the broth, and may then make adjustments (usually straightforward perceptual control, “needs more salt”), but the last step of the recipe has been completed.

All that a system that ‘calls’ a program knows is whether or not the program has delivered its output. Verifying that the output is correct, and behind that verifying that the program is correct, is a different problem. Your demo proposes that the system that does ‘program control’ in your sense of the term (i.e. recognizing when a program has stopped and acting to restart it) knows what the output should look like. That scenario might happen in nature, but I’m not immediately aware of an example. Maybe you are?

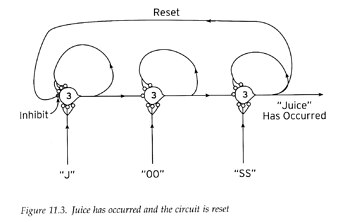

I think it’s much more likely that the program structure sustains an ‘in progress’ signal back to the calling system, and stops doing so when the program has completed. I think this is probably how sequence control works. Bill’s diagram for recognizing sequences issues a ‘reset’ signal on completion, which goes back and stops the millworks.

He doesn’t investigate how a higher-level system would send a signal to initiate control of the sequence; I’ve essayed this at places linked to earlier in this topic. However it is done, that same ‘reset’ signal would branch up as a ‘sequence completed’ signal.
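As a rough illustration of the signalling I have in mind, here is a sketch and not a rendering of Bill’s diagram. The class `SequenceRecognizer`, its `start` method, and the choice to ignore mismatching elements are all assumptions made for the example. The structure holds an ‘in progress’ signal while it runs, and on completion it issues a ‘reset’ that also reaches the caller as ‘sequence completed’.

```python
class SequenceRecognizer:
    """Hypothetical sequence structure with 'in progress' and 'reset' signals."""

    def __init__(self, expected):
        self.expected = list(expected)
        self.position = 0
        self.in_progress = False  # signal sustained back to the calling system

    def start(self):
        """Assumed initiating signal from the higher-level (calling) system."""
        self.position = 0
        self.in_progress = True

    def perceive(self, element):
        """Feed one perception; return True only when the 'reset' signal fires."""
        if not self.in_progress:
            return False
        if element == self.expected[self.position]:
            self.position += 1
        # Assumption: a mismatching element is ignored and the structure waits.
        if self.position == len(self.expected):
            # 'Reset' clears the internal state and stops the 'in progress' signal;
            # the same event branches up as 'sequence completed'.
            self.position = 0
            self.in_progress = False
            return True
        return False


recognizer = SequenceRecognizer(["circle", "blue"])
recognizer.start()
trace = []
for element in ["circle", "blue", "square"]:
    completed = recognizer.perceive(element)
    trace.append((element, recognizer.in_progress, completed))
print(trace)
```

The calling system here never inspects what the sequence produced. It sees only whether the ‘in progress’ signal is still present and whether ‘sequence completed’ has arrived.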

A program is a particular arrangement and interconnection of sequences and sub-sequences. If the perceptual state resulting from a sequence is connected to the input functions of two alternative sequences together with a ‘continue’ signal from a calling system at a higher level, that is a choice point which is decided by the match of that perceptual state to one input function or the other.
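A choice point of this kind can be sketched as follows, on the assumption that each alternative sequence has an input function that responds to exactly one perceptual state. The names `make_input_function`, `choice_point`, `expect_blue`, and `expect_red` are hypothetical, chosen to echo the circle/square example.

```python
def make_input_function(target):
    """Return an input function that responds only to one perceptual state."""
    return lambda state: state == target

def choice_point(state, continue_signal, alternatives):
    """Select the alternative sequence whose input function matches the state.

    There is no explicit 'if shape is circle' rule: the decision falls out of
    which input function the perceptual state matches, and nothing is selected
    unless the calling system also supplies its 'continue' signal.
    """
    if not continue_signal:
        return None
    for matches, sequence_name in alternatives:
        if matches(state):
            return sequence_name
    return None

# Assumed wiring: the state left by the prior sequence feeds both input functions.
ALTERNATIVES = [
    (make_input_function("circle"), "expect_blue"),
    (make_input_function("square"), "expect_red"),
]

print(choice_point("circle", True, ALTERNATIVES))
print(choice_point("square", True, ALTERNATIVES))
print(choice_point("circle", False, ALTERNATIVES))
```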